Linux from Novice to Professional Part 1 - System Level.

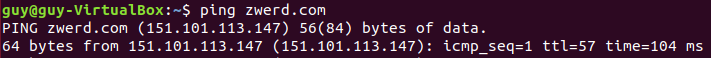

Today morning I received a message from LPI that my certificate will expire in 9 months and so it is time to start studying for the second Linux certification, this will advance me to the third Linux information security certification that I will search for, this certification is divided into two and the two parts are marked by two different codes: 201-450, 202-450

In this post I am going to go over each topic as listed on the LPI website, which means I will go over each tool and present it here, in each chapter I will show how to use these tools to get the same information that the subject of the test should deal with, at the end of each chapter I will present a challenge From most of the commands we learned and challenged you and me how to get the information to succeed.

If you want a brochure that deals with the topic extensively regardless of this post I recommend the booklet by Snow B.V. Her name: The LPIC-2 Exam Prep 6th edition, for version 4.5. This booklet helps me a lot and I recommend going through it at the same time, and yet I will try to elaborate as much as possible in the post here to be ready for the test.

Objective 201-450

Chapter 0

Topic 200: Capacity Planning

Before we start I think is good to memorize way we here to learn linux, just think about world without linux, the power that linux give us and the world is limit less, we can take that technologist and use it to bring new ideas and invention to the world. So at the start I think it is good to take a look of some comedy short video that I found.

In this chapter, we need to address computer hardware issues and how to use Linux to make sure that, such as system resources and resources, it is important to know these concepts because these tools can help us deal with the resource utilization problem, and we may run software only after a long time Since running it, or it is possible that only a certain program after a certain operation will consume all the resources on the computer, in this case we want to know how to look at the resources and check which component utilizes it.

In this way we can know which program is the problematic and which program causes the computer to work slowly and even freeze. Most of the tools we present here are tools that show you how to see what your computer’s CPU is used for, what software is currently working, how many free memory we have, and a few other things to help us understand how the Linux operating system works.

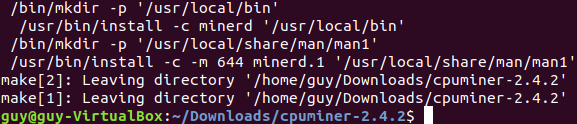

stress

In our case we can start some virtual machine to get ready and learn the commands, but how can we demonstrate some memory issues or CPU’s problems? in that case we can use stress, that command can load up our memory and CPU’s to 100 percentage which can be handy if we want to view such issue with the command that supposed to help us find the program that cause to that issue.

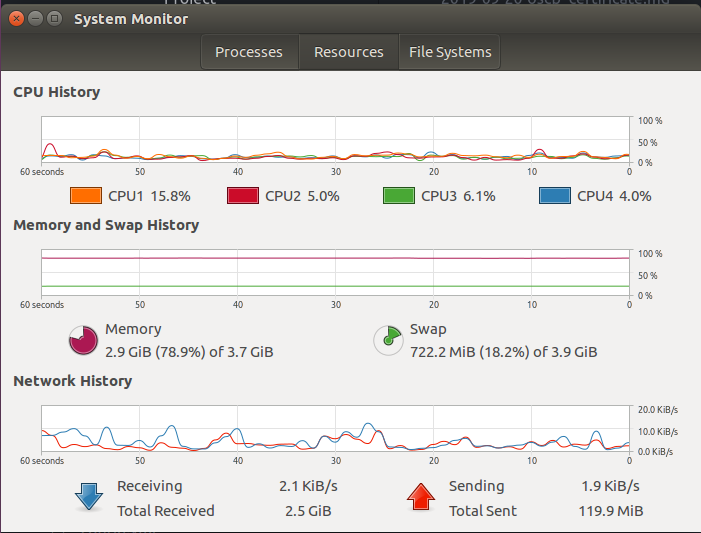

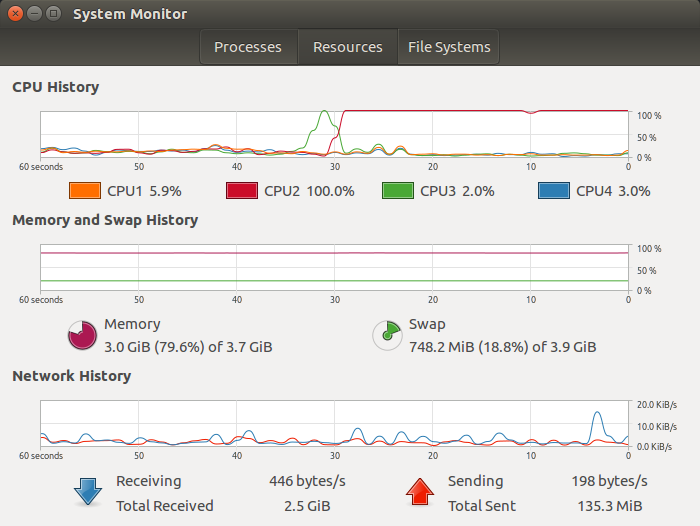

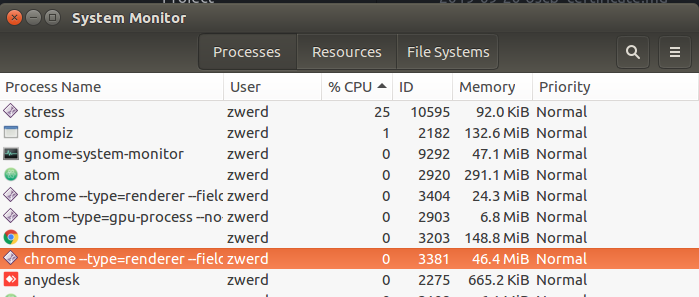

I am use Ubuntu, and like on other system operation, I have system monitor that can help me to view my processes on my linux machine, if some program take more CPU than others I may feel like I have sort of slowness in my computer, so, using the system monitor can be handy to find what is the problem in my case.

If some program utilizes the CPU overly you will see it on system monitor, on the resource the grap will jump up and on processes tab you can find what program utilizes how much from you CPU or memory, let’s run the following command.

1

stress -c 1

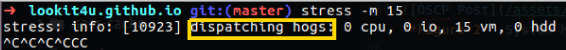

This command will dispatching the hug lol! it utilizes one core of your CPU, in my case I have 4 CPU, so one of them will be loaded and I can see it on my system monitor resource tab.

Figure 2 System Monitor, one CPU are loaded over.

Figure 2 System Monitor, one CPU are loaded over.

If I check out my processes list I will find that the stress program are running and utilizes at least 25% of my all CPU.

Figure 3 Stress program utilizes.

Figure 3 Stress program utilizes.

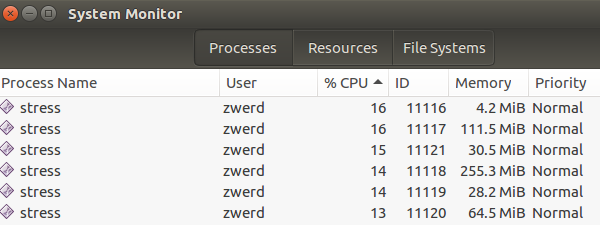

You can also run stress to utilizes the RAM, in that case you specify how much chunk you want to use, every chunk are 256mb, in my case I have 4 giga memory to use so to load it up I can run 15 chunk which is 3840 megabyte in total.

You can see all of my C^ keys because I freak out, my computer was freezing and I can’t do anything but the ctrl+C command, so now after it done I run 6 chunk that will not going to crash my system but at least I will be able to view the RAM used.

Figure 5 Stress the RAM again.

Figure 5 Stress the RAM again.

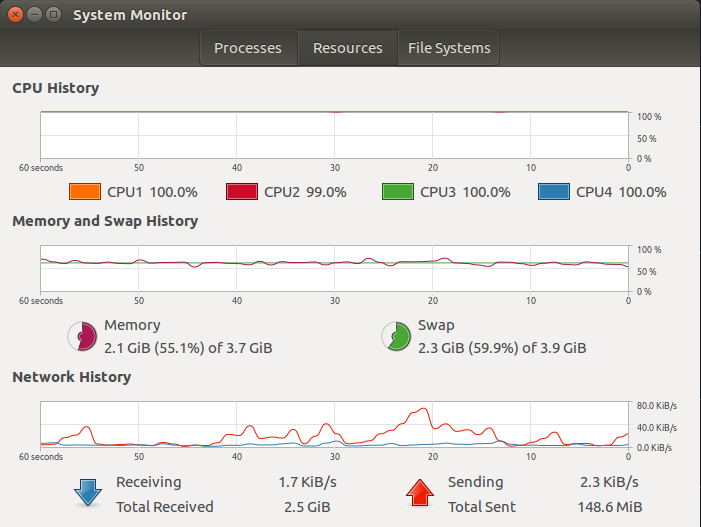

You also can see on the resources that my memory really over load and my RAM is over 55% used and also my swap is over 59%, please remember that the swap is your virtual memory that run on your hard disk, which mean in my case on the hard disk I have 4 giga memory that been used as swap, if you see such of thing this is not normal and maybe the system are loaded, becouse as default the swap memory will never been use unless the regular memory are over and that the swap get to use.

Figure 6 Stress the RAM, in resources.

Figure 6 Stress the RAM, in resources.

You can also use stress to load the system by using that hard drive, just type the following command, that command can affect the io which is the input/output wait and called i/o block, this is a processes queue, if for some reason one process can’t run because the CPU are over loaded, that process will wait on until the CPU can handle it, the worst thing about that is that this process is on uninterruptable sleep mode which mean that you can’t even killed it.

1

stress --hdd 1

But let’s say that you are working at company X, and there you have some linux server without GUI, so in that case you may what to be familiar with the command that can help you to find the problem via terminal.

Top

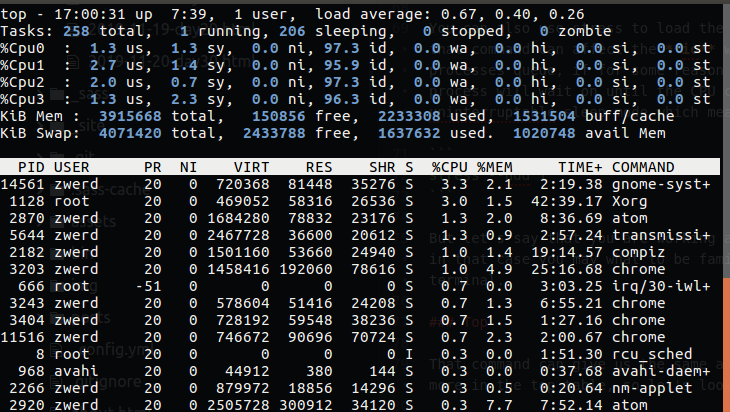

That command can give us the same as the system monitor, you can view the percentage of CPU, RAM and more in the top table, so let’s look at that.

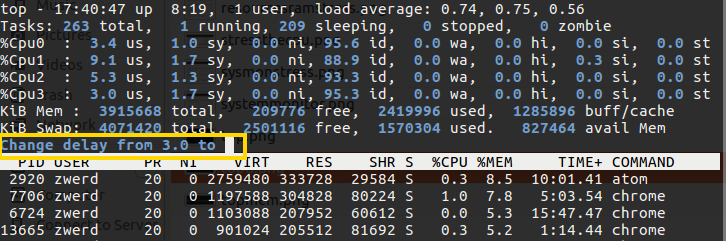

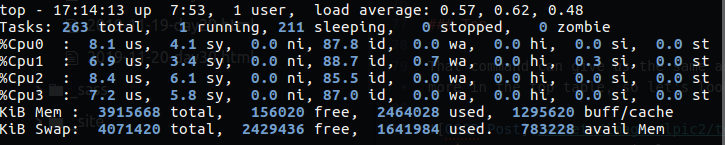

By typing the top commend, as you can see, it bring me table that refresh every 3 second by default, you can change that value by using the top -d 5 command for 5 second, or inside the top screen press d and it will ask you to setup new interval for screen update.

Figure 8 Change interval with d.

Figure 8 Change interval with d.

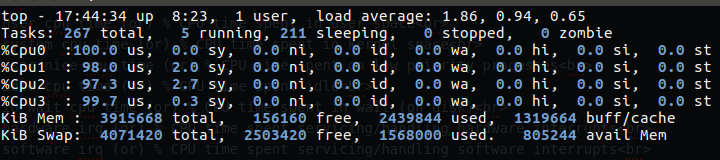

You also can see the CPU in percentage, if you have 1 CPU that mean using full of that will be 100% and you will see this number on the cpu in the top command, if you have 4 CPU than if one of them are on the 100% utilizes, than in CPU field you will see 25%. You can also view every CPU separately by press 1 on your keyboard.

Figure 9 Display every one of my CPU.

Figure 9 Display every one of my CPU.

The information for every field over the CPU are as follow:

us: user cpu time (or) % CPU time spent in user space

sy: system cpu time (or) % CPU time spent in kernel space

ni: user nice cpu time (or) % CPU time spent on low priority processes

id: idle cpu time (or) % CPU time spent idle

wa: io wait cpu time (or) % CPU time spent in wait (on disk)

hi: hardware irq (or) % CPU time spent servicing/handling hardware interrupts

si: software irq (or) % CPU time spent servicing/handling software interrupts

st: steal time - - % CPU time in involuntary wait by virtual cpu while hypervisor is servicing another processor (or) % CPU time stolen from a virtual machine

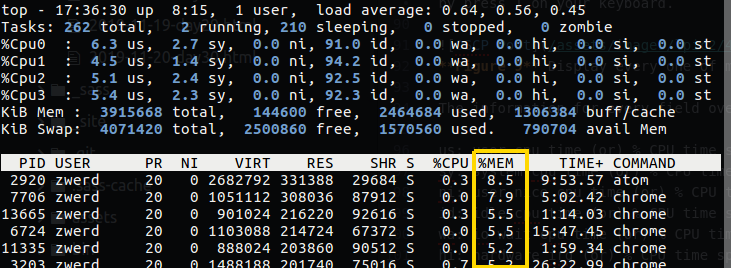

By default the CPU tab are sort, which mean that you can see what program take more CPU than others, if you want to change this sort and resort it by memory, you can press on shift+> that will sort the right column which is the RAM.

If I will run stress now for check the cpu on the top, my cpu can cam up to 100% only if I type the command as follow:

1

stress -c 4

You can see that my CPU is nearly a hundred percent, so this is mean that if I done stress -c 1 it only load just one CPU core. The same will be in the case of memory as we saw earlier.

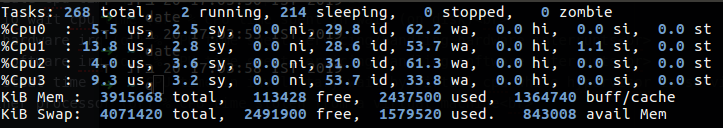

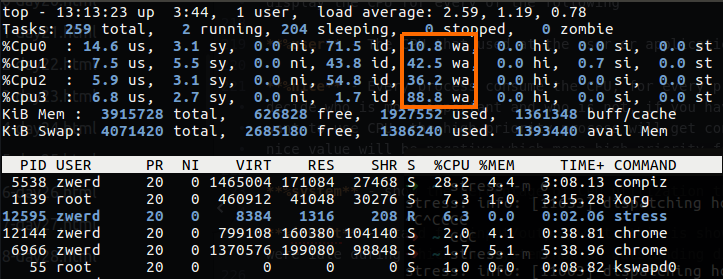

If I will load my hard drive which mean in our case to use stress --hdd 1 command, this can change the value of i/o wait time, on the top you can find it under wa field.

Figure 12 Wait time are loaded.

Figure 12 Wait time are loaded.

Again, this case mean that we have process that use the hard drive and there is a program that on wait state that in sleep mode that we can’t kill.

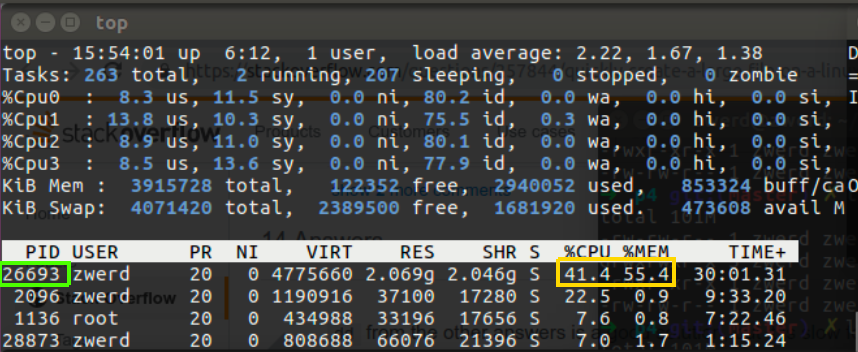

Please nore: this top command bring us more details that can be handy, as example we can know every command, which is more likely will be service name or process name, what is the CPU and memory that this program will use, also we know the username who run that service and also the PID for every process, if some process is over load we can kill it by typing kill -9 <PID number>.

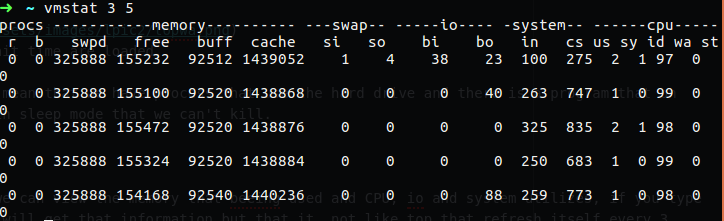

vmstat

In that command we can view the memory that being used and CPU values, i/o and system utilizes, if you type this command you will get that information but that’s it, not like top that refresh itself every 3 second by default, but you can run it with refresh like, by using delay and count option. In the delay you specify how long to wait between every time it display you the information, the count is how many time it will repeat it self, in that case you can see the changes along the way.

1

vmstat 3 5

In that case this command display report every 3 seconds and repeat that five times, as you can see on the output I have, the free memory change every 3 second and so in the cpu and i/o.

Figure 12 vmstat, 3 seconds delay, 5 count.

Figure 12 vmstat, 3 seconds delay, 5 count.

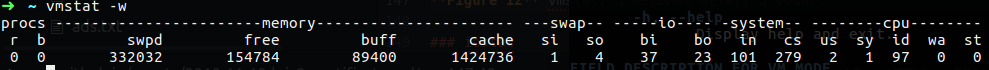

You can view also only the disk i/o that display the read and write statistics by using -d option or memory statistics by using -s option. The following is the way to view the table in wide mode, it’s display more readable table which can help understand more.

1

vmstat -w

You can combain the option as follow:

1

vmstat -w 3 5

I have being seeing people that use that command in their program to track the operation of the memory and CPU, in the case of issue with the resources we have, you can find more useful commands than that.

In the man page I found the following information that related to the field in that output:

Procs

r: The number of processes waiting for run time.

b: The number of processes in uninterruptible sleep.

Memory

swpd: the amount of virtual memory used.

free: the amount of idle memory.

buff: the amount of memory used as buffers.

cache: the amount of memory used as cache.

inact: the amount of inactive memory. (-a option)

active: the amount of active memory. (-a option)

Swap

si: Amount of memory swapped in from disk (/s).

so: Amount of memory swapped to disk (/s).

IO

bi: Blocks received from a block device (blocks/s).

bo: Blocks sent to a block device (blocks/s).

System

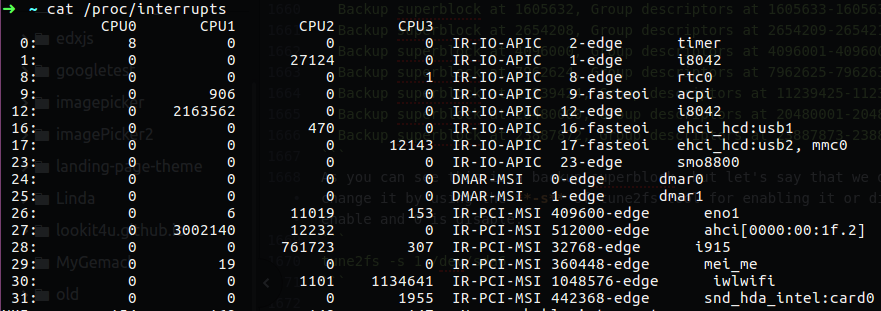

in: The number of interrupts per second, including the clock.

cs: The number of context switches per second.

CPU

These are percentages of total CPU time.

us: Time spent running non-kernel code. (user time, including nice time)

sy: Time spent running kernel code. (system time)

id: Time spent idle. Prior to Linux 2.5.41, this includes IO-wait time.

wa: Time spent waiting for IO. Prior to Linux 2.5.41, included in idle.

st: Time stolen from a virtual machine. Prior to Linux 2.6.11, unknown.

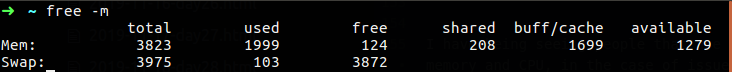

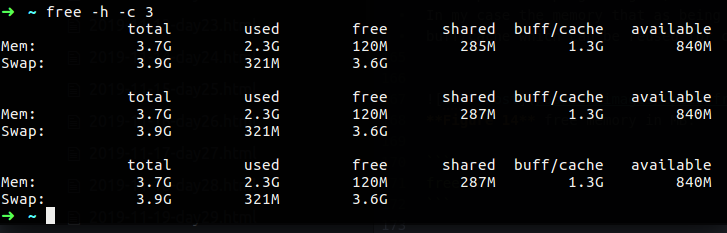

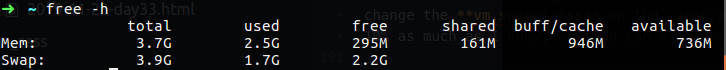

free

In that command you can more easily understand your free memory that using mvstat in my opinion, because it allow you to view that memory by using megabyte instead of kilobyte that requires you to calculate these values.

1

free -m

In my case I have 3823 MB memory in my computer, the memory that are used is 1999 MB and free memory is 124 MB, the shared memory in my case are 208MB, this is mean that there is a two or more process that can access that common memory, the buff/cache are the memory that in the buffer or cache, the consept of that is that if you used some program and close it, that memory going to cache, if you will open that program again, the memory are cached in, so it can bring up the program more quickly. However, if you need this memory, it will allow you to use the memory and get of the cache. In my case the memory that as being cached are 1699MB, available does include free column + a part of buff/cache which can be reused for current needs immediately.

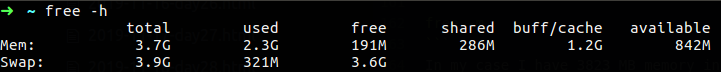

1

free -h

You can also use the -h option which stand for human, it can help you more clearly to view the amount of memory that are used or free.

You can also use count which can help display the output several times like in the vmstat, but we haven’t delay option, the delay will be 1 second.

The swap memory shouldn’t been used, because as we saw earlier that memory should be available only for case we have no memory to use in our system. In my case the SWAP are use more then 300MB, which is not so good, because as I said that need to be normally in value of 0. In Ubuntu the default value of the swap that can being used although we have free space in the memory is 60% of the swap in total, we can view our swap value in by using the command cat /proc/sys/vm/swappiness, in order to change that value we can use the following command:

1

sudo sysctl vm.swappiness=10

Please note that in the case of sysctl, the changing of swap is temporary value, which mean after reboot the value will go back to the last value, to make sure this value be permanent we need to change the vm.swappiness on the /etc/sysctl.conf file. The recommended value for the swap is 10% as much as I know, but you can experiment it.

I use the following command for look on my memory changing:

1

stress -m 6

In that case you can see that swap are really working hard and the cache was release a bit, this is mean that my system going buggy behavior, and now we need to find what causes this issue.

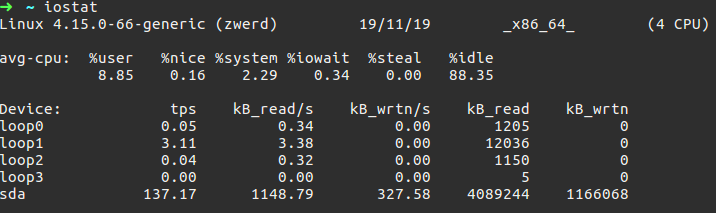

iostat

According to man page, the iostat report Central Processing Unit (CPU) statistics and input/output statistics for devices and partitions. The iostat is a part of the sysstat package which mean that this packets contain several other tools and one of them is iostat, in my case I use Ubuntu, but if you are using some other operation system that doesn’t have that tool, just install it on you distribution, if it’s Debian like, you can use apt-get, if it is Fedora like, you can use yam, in my case to install the sysstat package I run the following:

1

sudo apt install sysstat

By running the iostat we can find information about our i/o on our system.

The ouput contain the linux kernel version, which is Linux 4.15.0-66-generic and my PC name which is zwerd. You can also see the date (although for just date we use date command). we also can see the type of our operation system which is 64 bit in my case, and that I have 4 CPU available. avr-cpu display the CPU for every of the following

%user - The CPU that used at the user or application level.

%nice - Every process consume the CPU, for every process there is a priority that can be use to decide who is more important and who is not, if you have up to 10 process on the backgound and they want to use CPU, the high-priority process will get consume the CPU before other, in that case the nice value will be negative which mean high-priority for that process.

%system - show the percentage of CPU utilization the been use by the kernel.

%iowait - stand for input/output wait, this is show the percentage of time that the CPU or CPUs were idle during which the system had an outstanding disk I/O request.

%steal - Show the percentage of time spent in involuntary wait by the virtual CPU or CPUs while the hypervisor was servicing another virtual processor.

%idle - Show the percentage of time that the CPU or CPUs were idle and the system did not have an outstanding disk I/O request.

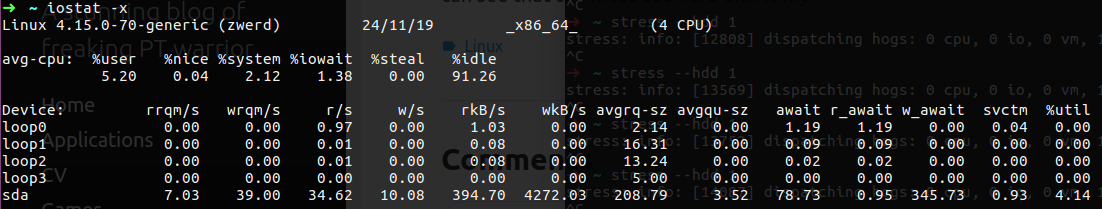

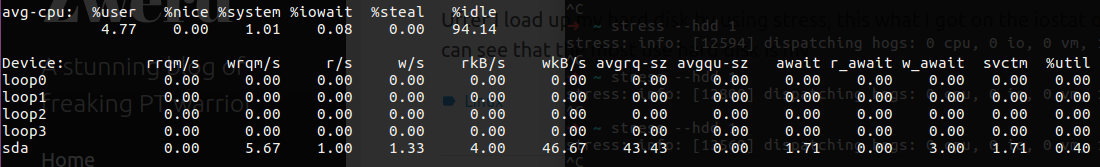

Let’s load up my system to see the changes on our output, I use the command stress --hdd 1, if we use top command we will see the wa load up, which mean that we have many process that in wait time state and they wait for CPU that can process them.

Figure 19 I/O wa by using top command.

Figure 19 I/O wa by using top command.

We can use -x for extended information that include utilization as well, we also can print out the output as that same way we done on vmstat, it can display output multiple time and delay from each as follow:

1

iostat -x 3 5

In that case it will print out every 3 second, 5 times.

Figure 20 iostat with extended.

Figure 20 iostat with extended.

After I load up my hard disk by using stress, this what I got on the iostat output, you can see that the values on the output load a lot.

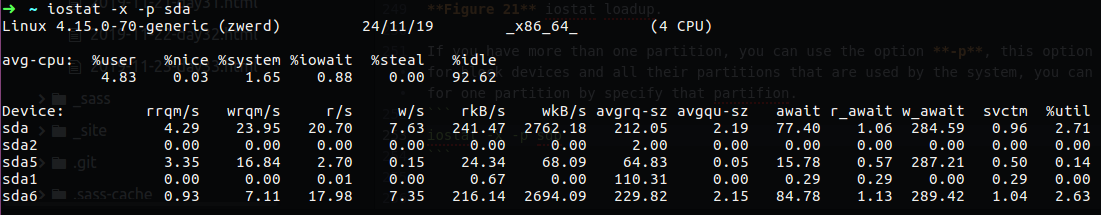

If you have more than one partition, you can use the option -p, this option displays statistics for block devices and all their partitions that are used by the system, you can also display it only for one partition by specify that partifion.

1

iostat -x -p sdb

Figure 22 iostat for specific partition.

Figure 22 iostat for specific partition.

sar

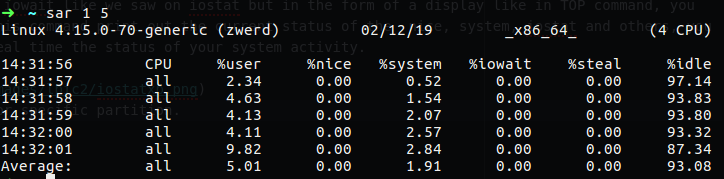

If you want to see the iowait like we saw on iostat but in the form of a display like in TOP command, you can use sar command. This command print out the current status of the nice, system, iostat and others, you can use it to see on real time the status of your system activity.

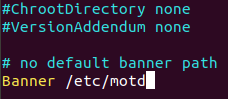

In my case I use sar to display state every second for 5 times, as I already write above, sar is part of sysstat, so if you have some issue to run it, you need to check that it’s enable on the file /etc/default/sysstat:

1

2

3

4

5

6

7

8

9

#!/bin/bash

# Default settings for /etc/init.d/sysstat, /etc/cron.d/sysstat

# and /etc/cron.daily/sysstat files

#

# Should sadc collect system activity informations? Valid values

# are "true" and "false". Please do not put other values, they

# will be overwritten by debconf!

ENABLED="true"

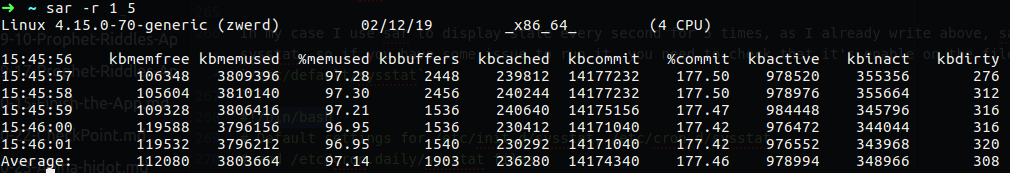

You can run the command sar -r 1 5, the -r option stand for report memory utilization statistics, on the output you can find the KBCOMMIT and %COMMIT which are the overall memory used including RAM.

You can also run sar -S which will give us information about the SWAP free and used memory.

Please note: sar is actually collect, report, or save system activity information and it can be any information activity regarding our system, as example we can use sar -b which will bring us report I/O and transfer rate statistics, one of them is the tps which is the total number of transfers per second that were issued to physical devices.

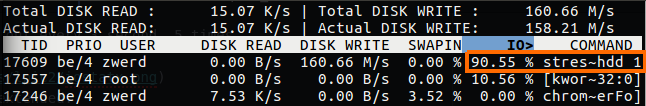

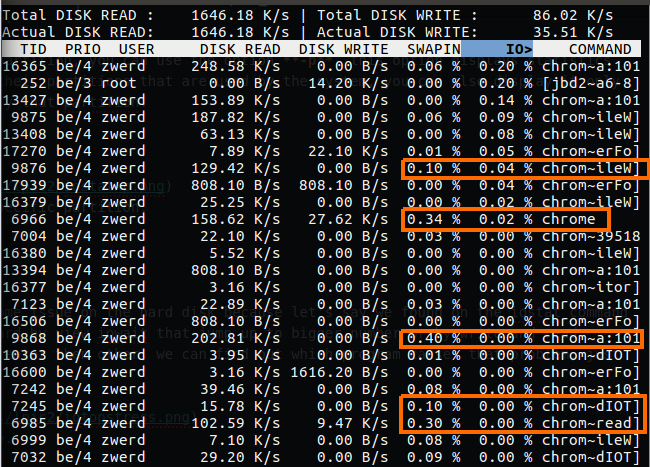

iotop

If we found that we have some issue on the hard disk because let’s say we found on the iostat command that the i/o work really hard by view iowait that jump up to bigger number or by write and read values from the disk are greater then other, we can find out which program causes that problem, just type iotop.

In my case you can see that it tell us that the most utilizes program in the IO of my hard disk is stress, you also can see that it specify the hdd by side of that, which tell us what option has being used in stress command.

In the iotop you can also see the swapin value, and in my case as you saw earlier, my swap are really utilizes by some program, so I checked it out and find what is the program that used my swap.

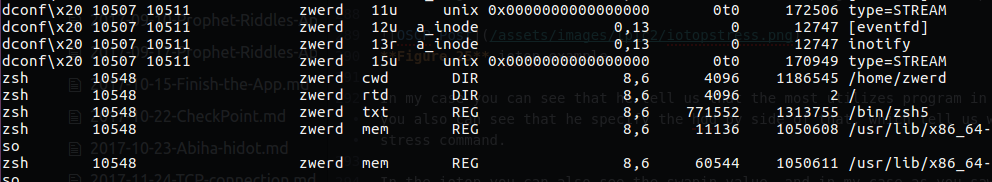

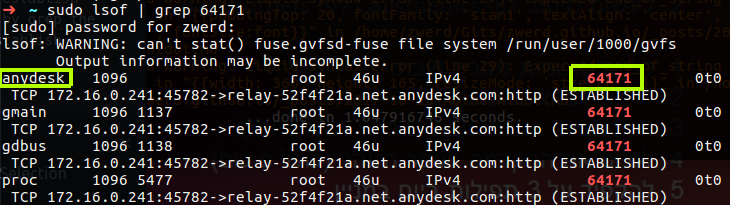

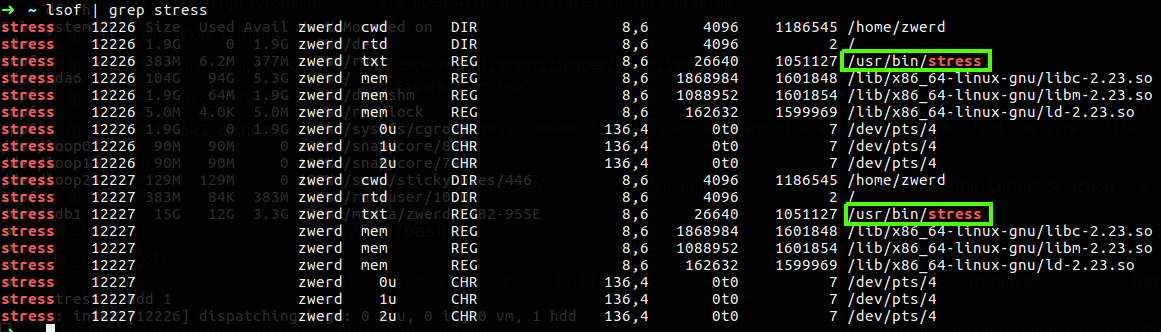

lsof

This command list open files on your system, which mean you will see all files that are use under your username, so if you run that command it is not very useful, but I tell you what, if you feel like you have some memory issue of hard disk working hard and you check and find what program are running, you can run lsof and grep the program you suspect that make you the issue, and then you can see exactly what files are open with that related to this program.

Let’s run stress command for utilizes the hard disk and see if we can find the stress files.

Figure 27 lsof stress files that are in use.

Figure 27 lsof stress files that are in use.

As you can see the binary file are in use, if you see that your computer are leak of speed because of some program and you want to check what files are used by that program, the lsof can be the solution for you.

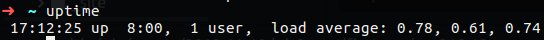

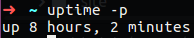

uptime

This tool can tell you the how long the system has been running, also you can find out how much users connect to this PC and what is the load average, the load average first number represent the load time average for 1 minute, the second is for 5 minutes and the last one is for 15 minutes.

You can view the exec time in nice mode that can be display.

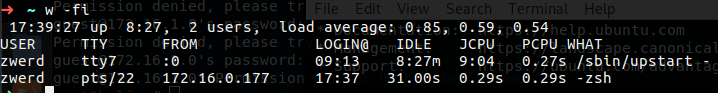

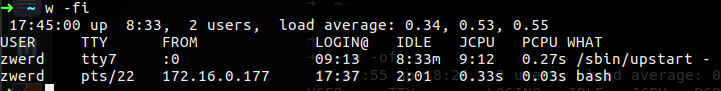

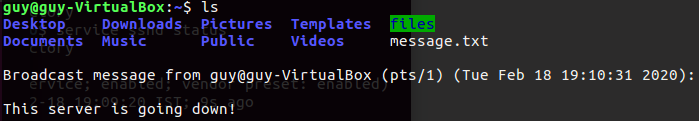

w

This command show who is logged on and what they are doing, you also can use the who command, but this command can be handy in the network cases, let’s say that some one connect to your computer or server remotly, you can use this w command for checking out what is the source ip of that user.

1

w -fi

The -f option stand for from filed that will be specify on the output, the -i display on that line the ip address rather the

You can see that I have two connection that one of them have IP address, I made this connection from other PC to my local computer, the first line specified my local user. You also can see that the remote user use zsh program remotely, if I change it to bash on my other PC I will see that on the w output

Figure 31 Using bash instead of zsh.

Figure 31 Using bash instead of zsh.

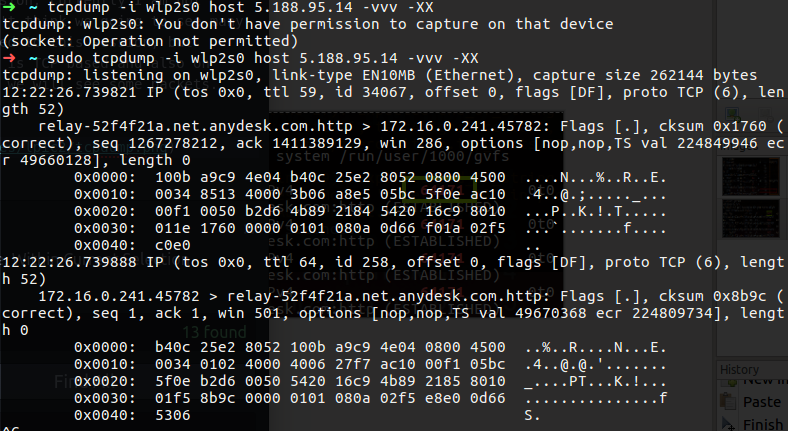

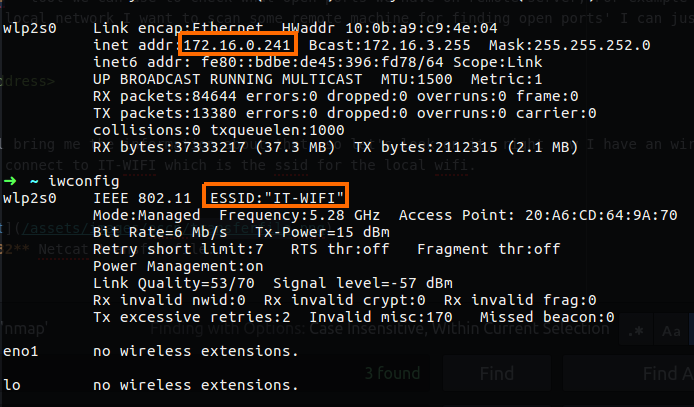

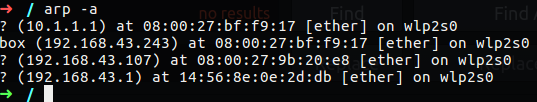

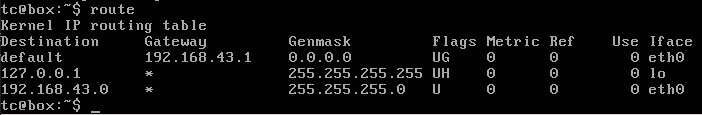

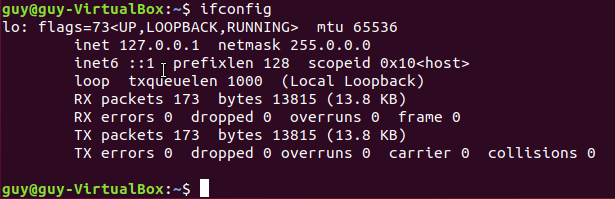

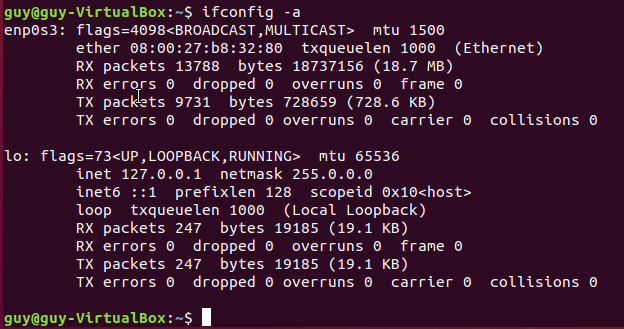

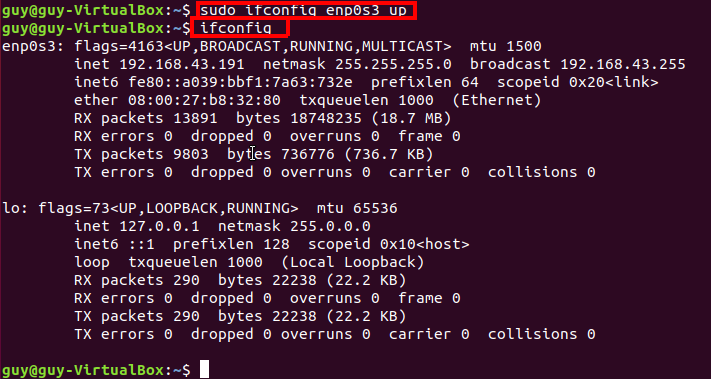

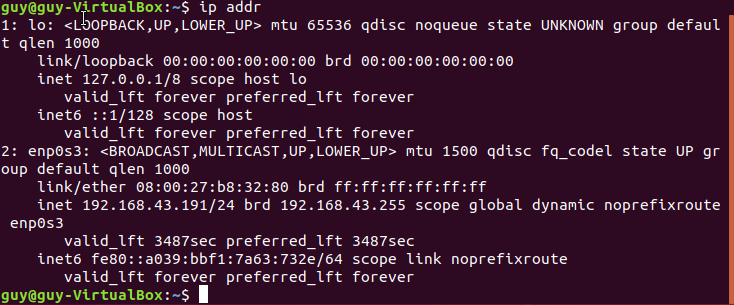

netstat

This tool can help us to find out on the network level processes that using the network, and many connection that are open between your computer and the local network. But first of all it is important to know how we can look at the network level using netstat.

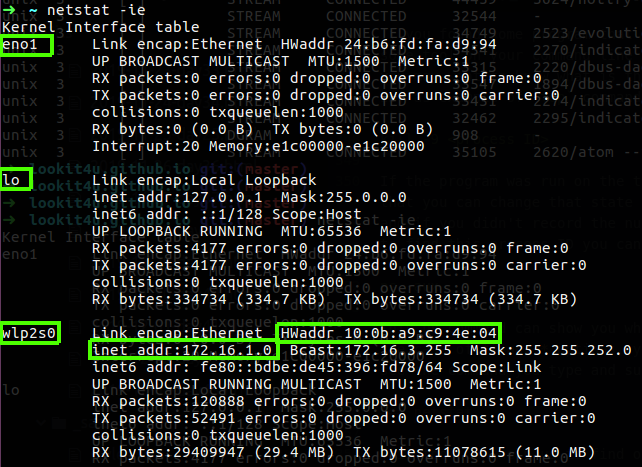

1

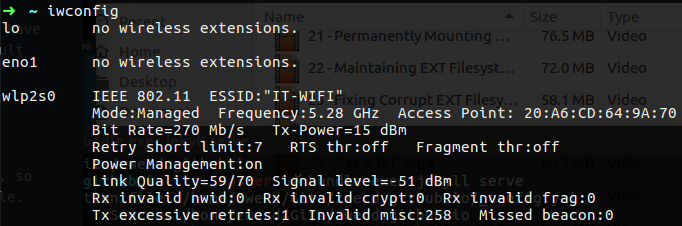

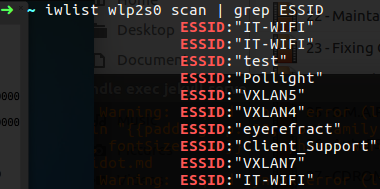

netstat -ie

This command will show us our local network interface with his IP address and MAC address which is the physical address of that interface.

Figure 32 My interfaces network.

Figure 32 My interfaces network.

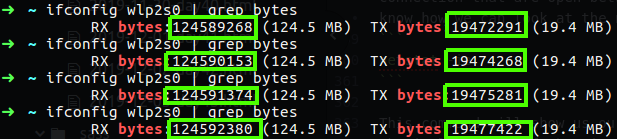

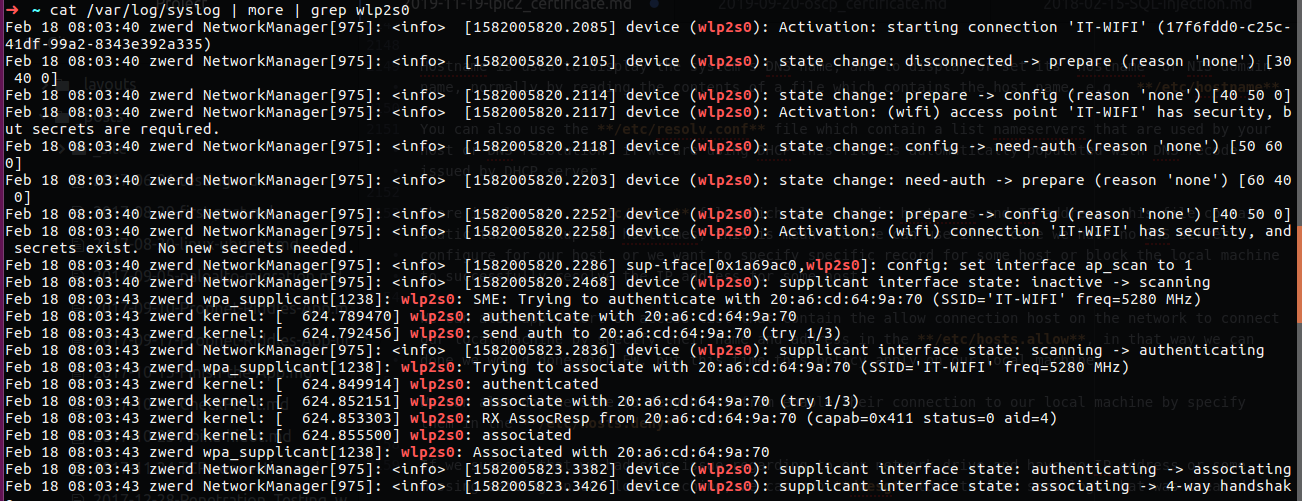

It is the same as ifconfig, you can also see the TX and RX which stand for transfer and receive of the bytes over that interface, the eno1 is my physical interface while my lo is the loopback, the interface I used right now is wlp2s0 which is my WIFI interface, you can see that the TX and RX on that interface are pretty high, you can grep that by using the “bytes”

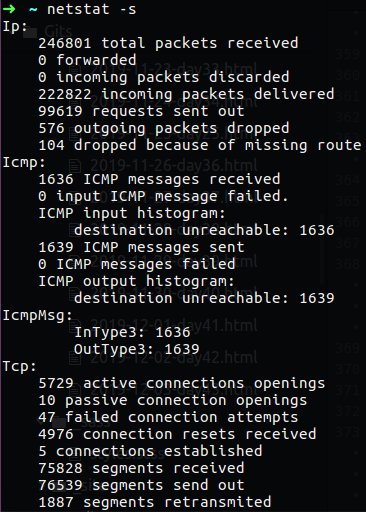

We also can use netstat to get the statistics of our network interfaces, in that case we will see the count of IP pakets that go though our network interface, we can see the icmp packets that are used for PING traffic and also there is the TCP and UDP packets the go through out interfaces. If we see an error in one of the statistics under specific protocol/field we will know that something on our network are stoping us to get that streem of packets.

1

netstat -s

Figure 33 Statistics by using netstat.

Figure 33 Statistics by using netstat.

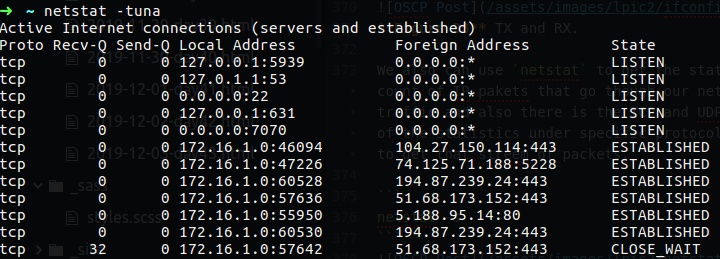

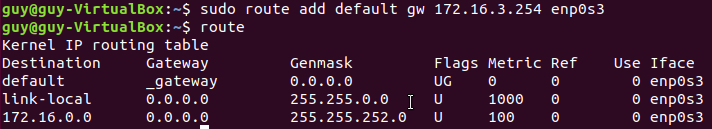

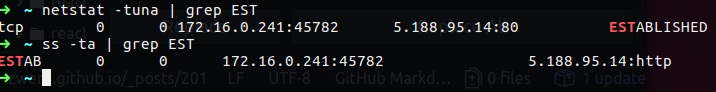

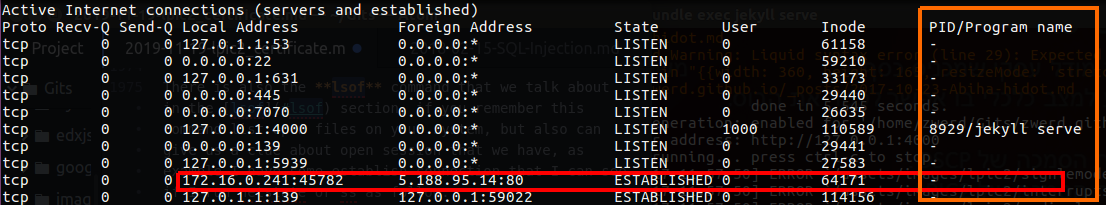

You can also use netstat -tuna, the -t stand for TCP connection only while -u stand for udp, the -n will show the numericla address instead of trying to find the hostname, -a stand for show both listening and non-listening sockets, so by running this command we can see the connection that are running over the network and their address

Figure 34 Statistics by using netstat.

Figure 34 Statistics by using netstat.

The command I love to use is netstat -nepa that can show you the program who use the connection and their process ID number which can be handy to find some process that use the connection and stop it by that ID number.

Figure 35 Process ID of networking connection.

Figure 35 Process ID of networking connection.

TIP

If you found some program that make some issues on your commputer, let’s say that you run some script or some tool on your command line or you have some program on the GUI and you want to kill it, in that case you may want to know what is the ID number of that process, after you find out what is the process number ID, as example of top command, you can kill it by using the following:

1

kill -9 <process ID>

If the program was run on the terminal and you type ctrl+z that it is on suspended state, this is mean that you can change that state and load it again or just kill it. For killing it you need the proccess ID, and if you didn’t record the number ID you can’t find that process on the top because is on suspended, so to find this process ID you can type the follow:

1

ps -aux

This ps command can show you what is the process ID that are suspended and after that you can kill it. in the case of -aux options, you will see all the process that run under your user, you also be able to see the command you type and suspended under the COMMAND column.

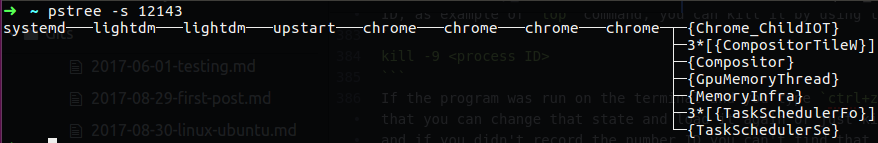

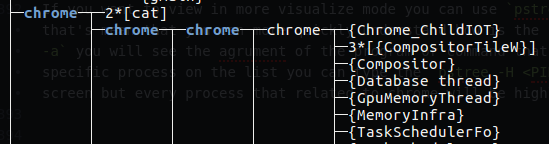

If you want to view in more visualize mode you can use pstree, this command display tree of process, that’s mean that you can more quickly understand how is the parent and child of process. if you use pstree -a you will see the agrument of the program or command that are run on your system. If you want to see specific process on the list you can type the pstree -H <PID>, in that way the all tree print out on the screen but every process that related to chrome will be highlighted.

Figure 35 Chrome process ID using pstree.

Figure 35 Chrome process ID using pstree.

If you wnat to see just that process without all the tree we can use pstree -s <PID>.

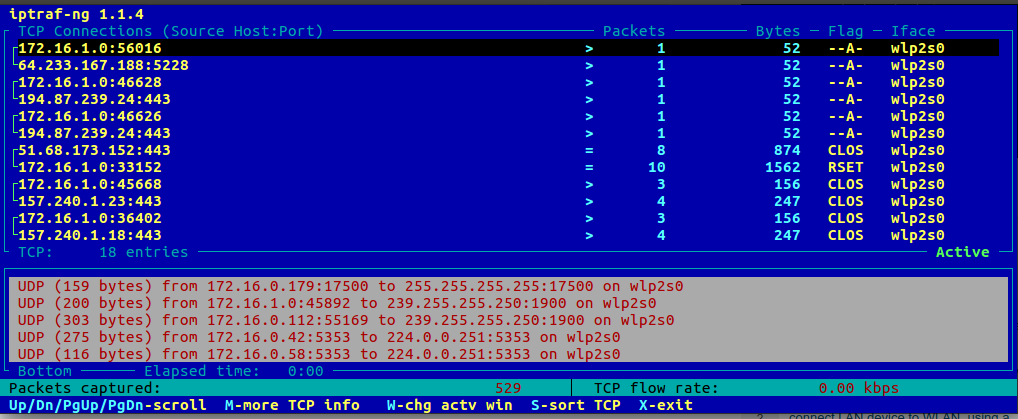

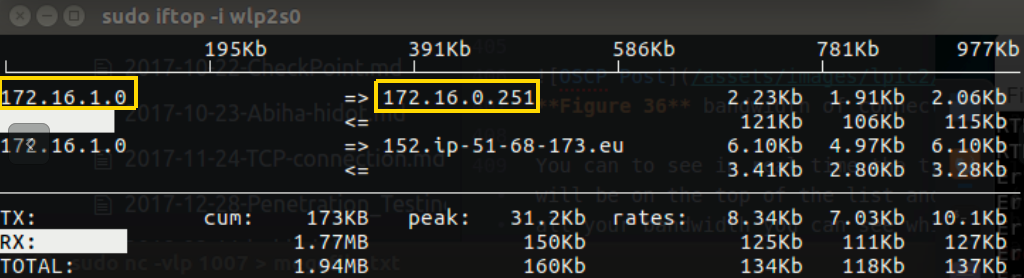

In the network cases process you can also use very handy command that can bring you more relevant information, as example let’s say that we want to see the bandwidth and the statistics of the utilization of that bandwidth with connection information like source and destination address, well for that case we can use iftop command that can bring us information in real time about the traffic that go through out network interface, you can see by using that command the amount of bandwidth that are utilize by which host that have connection to our local computer.

Figure 36 bandwidth of connection in real time.

Figure 36 bandwidth of connection in real time.

You can to see in real time the traffic that I have on my wlp2s0 interface. The bigest connection trafic will be on the top of the list and that can give you a clue if you have some program that take advantage of all your bandwidth you can see which is the destination address and go back to the netstat to check what is the process that run this connection.

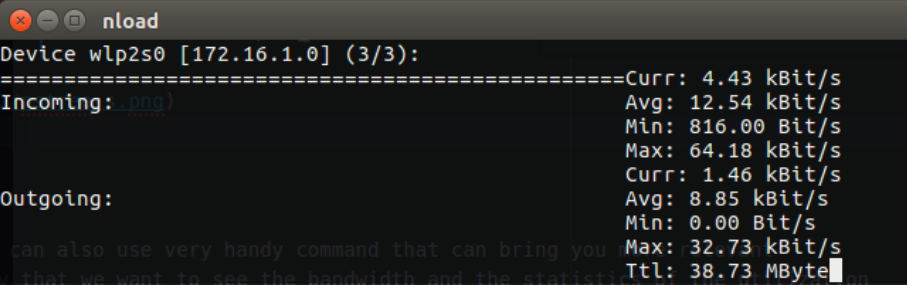

Another handy tool you can use is nload, this tool are monitor your bandwidth load, which mean you can see if you have a lot of traffic going on the incoming side or outgoing of you interface, in my case I connect to Mint Linux site to download some iso file that you can see my graph changing on the nload command.

Figure 37 Using nload and iftop to see the download traffic.

Figure 37 Using nload and iftop to see the download traffic.

The graph load up and on the iftop we can see the address that have a connection to my computer which I download the iso file from it.

You can also use iptraf or iptraf-ng these tools allow you to see the connectiviry of TCP connection that came to your computer.

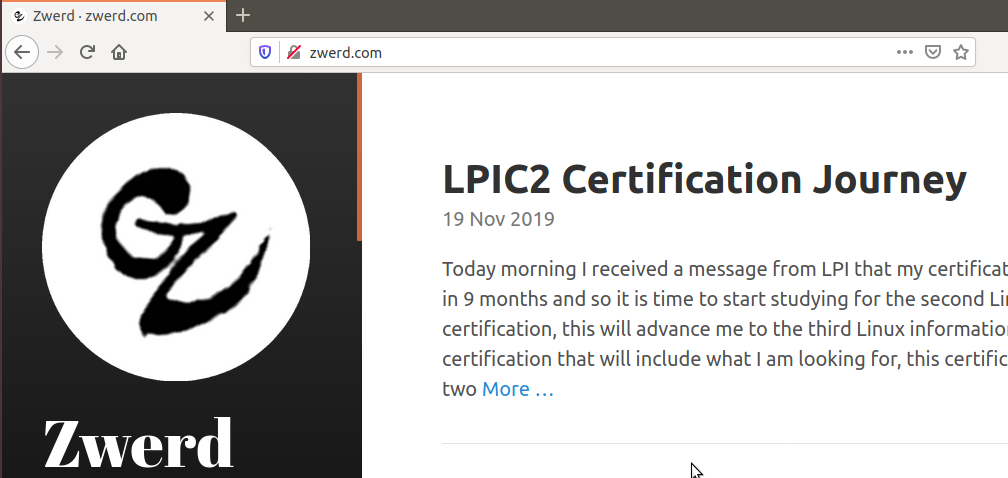

Network Monitoring

Let’s say you work at the IT position on some organization and you got some call from the support team that tell you they have some client that his computer work slowly in every action over the network or internet, in that case you will need to check and troubleshoot that issue, you need to find out if the slowness appear when the client side over the internet or on his local network, to do so we have many tools that can help us in these cases.

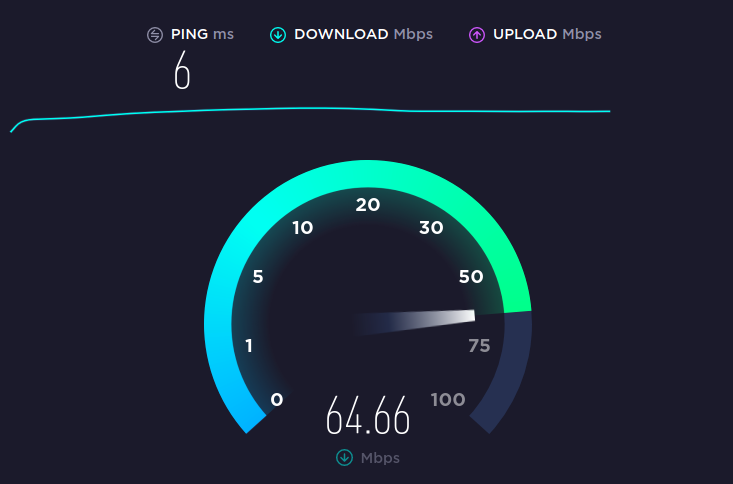

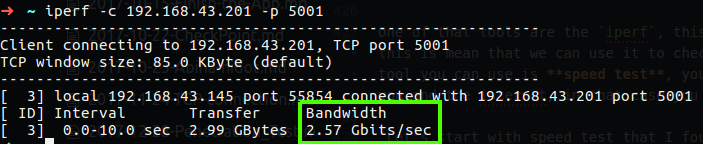

One of that tools are the iperf, this command can check the bandwidth between two point over the network, this is mean that we can use it to check if the local network have the slowness issue or not, the other tool you can use is speed test, you can find one on online website that can check the bandwidth between you and the internet, in that case you will be able to see if the slowness are appear over the internet.

Let’s start with speed test that I found speedtest.net, you just need to click the button and this site will give you the details.

In the case of iperf we have two mode, server mode and client mode, it dosn’t matter were you run each mode, what is matter is what is the bandwidth between them, on the client side we need to run iperf -c <server address>, on the server side we just need the command iperf -s, after we run it the detail about the bandwidth between the server and client will reveal.

You can see that in my case the bandwidth from my computer to another in my lab is 2.57GB which is good for me, in the case of our example that you have client that complain about slow network, you may have to check several thing, the first one is to check his and other computer connectivity out of the local network with speed test, after that you will go to the second check which is bandwidth test between two local computer. If on the first test using the speed test, let’s say you found that the client computer work slow by speed test and other computer aren’t, then you run the second test which is the iperf and found low bandwidth connectivity between the two, in that case it’s mean that we have local network problem that can be found on the local network interface or the nearly network device like switch or router.

On the LPI site under 200.2 object you can found that they want the student will have awareness of monitoring solutions such as Icinga2, Nagios, collectd, MRTG and Cacti, well these programs can be install on you computer or other server and you can monitor the resource you have, but the Cacti is monitor program for network connectivity and so the collectd, so I don’t really understand way they want to have a knowledge of these program, in the day day life I don’t think I am going to use such program to monitor my network resource, but in the case of these program, all of them work in the browser, this is mean that you have some URL and you can view the bandwidth or interfaces status etc.

Please note: after all we see here I want to specify something that I solve on many situation I had in the companies I worked for. Let’s say that you have some web server that have some content of data for many users, so far you after you read all of that chapter you have the knowledge to use Linux tool for checking the performance, memory used, network bandwidth and etc. If for some reason you see that the RAM used was increase every months and in percentage that grow up, in that case you may consider to upgrade the hardware for that server like adding more RAM memory or install more web application server and distribute the load between them, so in such case we will check carefully what is going on our server in the hardware used, we can monitor that and track down the performance with the tools I specify above.

Challenge 200-1

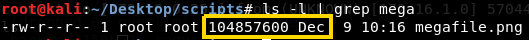

- create some large file that contain more that 100MB.

- Transfer that file from other computer in your lab by using

nccommand. - Check and view the transformation and bandwidth utilization.

- Check the CPU and RAM used during the transformation and find the PID.

This is simple challenge but it can help us to rule the commands we learn so far, I know that there is a new command in that challenge like nc, but it you going to linux field you need to have a clue how to solve issue and problems alone by searching the solution and be comfortable with new commands on cli.

1. Create file of 100MB

To create large file I used the fallocate, this command can create for you file in any size you will need, in my case it was very useful.

1

fallocate -l 100M megafile.pno

This is not really matter what extension for that file you will create, what is matter is that file are in the size we want which is 100 MegaByte.

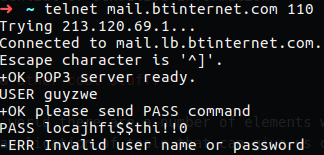

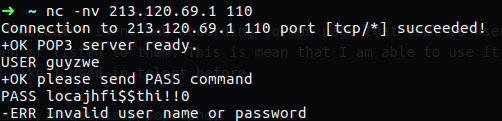

2. Transfer the file you have to other computer in the LAB.

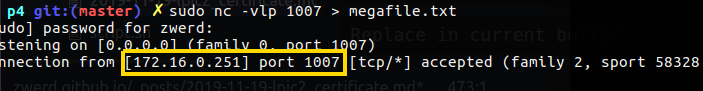

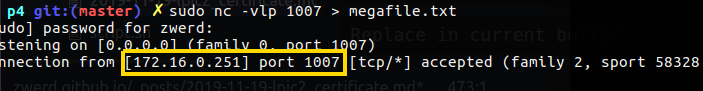

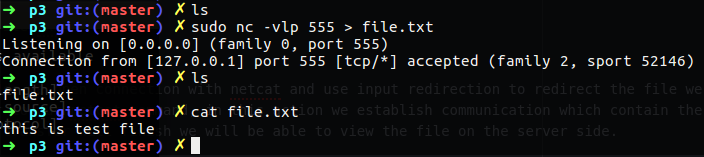

I created that file on my Kali linux so I tryied to transfer it back to my Ubuntu, on my Ubuntu I set up the netcat to play as server with the following command

1

nc -vlp 1007 > megabyte.png

On the client which going to be my Kali linux, I run the following command:

1

nc -v 172.16.1.0 1007 < megabyte.png

Please note that in my segment the subnet contain more than 254 address which is classless of 23 as prefix, also note the redirection, on the client side I redirect the megabyte.txt file to the connection that I am going to have with netcat, on the server side I redirect the output from netcat to the megabyte.txt which will going to be the same as on my Kali.

Figure 42 Netcat on my Ubuntu, the address is of my Kali.

Figure 42 Netcat on my Ubuntu, the address is of my Kali.

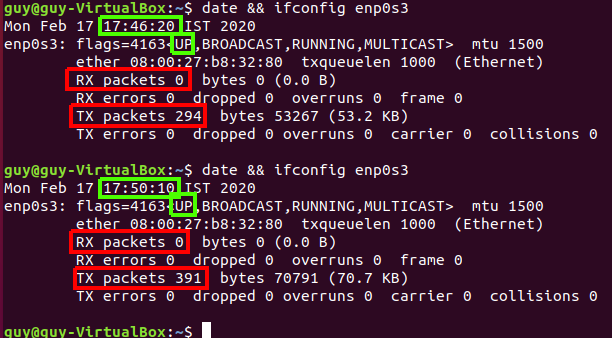

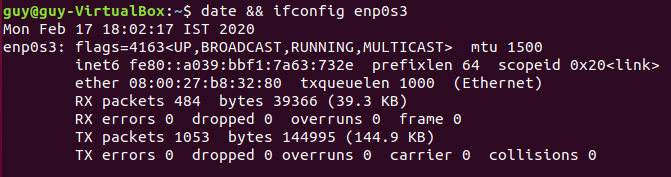

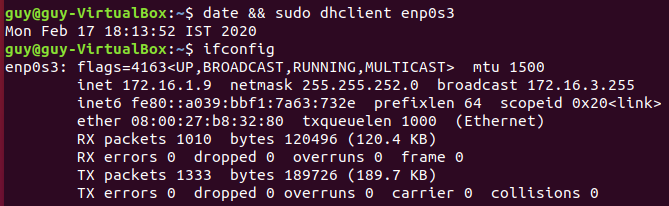

3. Check bandwidth and utilization.

Wile the file are transfer I run on my Ubuntu the command we saw earlier to view the utilization of the bandwidth on the network interface, the first one was nload command that will give us the information in a half of second what go through in your interface.

I can see that there is a tranformation or more likely data that go through my interface network, but I can see the connection it self, that go in and out through my interface, in that case I needed to use iftop which can tell us each connection what is the source address and the destination.

You can see the address 172.16.1.0 which is my computer, the Kali has the 172.16.0.251 address, so now we know that there is connection between us and other machine and we know that data are transfer in this connection.

4. Find the PID of the utilize program.

Now let’s say that we want to check the state of our system, like checking the CPU and RAM that used, I run the top command and found some process that showed up on the top of my list, so this is the process that I want to look at it.

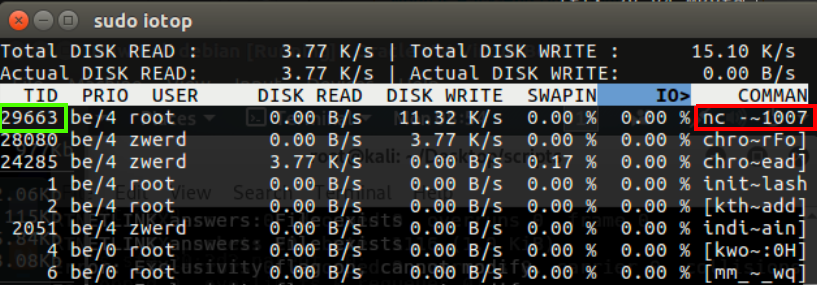

You can see that I have the PID, so now we want to find and check the state of disk IO, because remember, if there is utilize of the CPU and RUN, something like that can be a program that run on our disk, in my case I know that some program are running on my Ubuntu, so I run iotop command which can tell me what was done on the disk.

Now you can see by filter the PID we found earlier and found the program that run on our PC, in my case it’s nc program and it’s also showed me part the nc command which contain the port 1007.

Summery: it is important to know how to read and found information of some program in our linux operation system to deal with utilization issues or network problem, the PID can be your best friend to address the issue out but it’s important to know that if we decided to kill process, this action may not be the best solution for that issue, because maybe that problem will appear back again tomorrow, the best solution can be found on the dip level of that issue, we just need to understand why that issue appear and for what it depends, after we found that we may have the ultimate solution that can migrate that issue for best.

Chapter 1

Topic 201: Linux Kernel

When we talk about linux kernel we want to be able to find out what is our linux kernel version and how to read that version, which mean how we know if that version is stable or be familiar with more details that this version number contain.

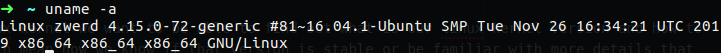

First of all let’s check our version number, we can done that by using the uname -a command, this command will print out the version number and the name of our machine also time and date and even what is our computer architecture, which is 64bit in my case.

Figure 47 My linux kernel core.

Figure 47 My linux kernel core.

The first number is the version number, in my case it is kernel version 4, the next number is the major revision which in my case is 15 and the third number is the minor revision number, the fourth number is the patch level.

In the past, in the version of the kernel was a general rule in the number version, in the second number every odd number was as developed version, and the even number was as stable version, as example the 1.5.2 kernel was under development and version 1.6.2 was known as stable kernel version, when the kernel version 2.6.x came along it stay for long time without numerate the number except the last one number, because version 2.6.x was so awesome! so the third number and even the fourth number which stand for the patch number was the only numbers that numerate long the way.

One more thing that you need to know is that after they release the version 2.6, they get ride of the odd/even number and every new release is stable and not under development, every stable version will develop and update on a new version.

When the version of that kernel raise up and was 2.6.39.4 than Linus Torvalds decided update the enumerating to be more like the old one, which is the first number will be major release, the second will be minor release and the third will be the minor revision which is stable or patch to update abilities on the kernel. You may sometime see like a fourth number which play as a patch, in my case of 72-generic this is the Ubuntu specific patch they done.

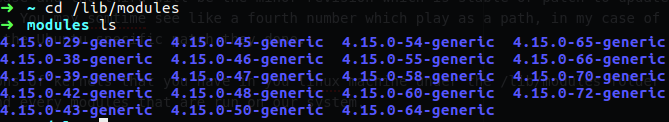

You can find the versions of kernels that you have in your Linux machine under the /lib/modules folder and in each one we can found every modules that are run on our system.

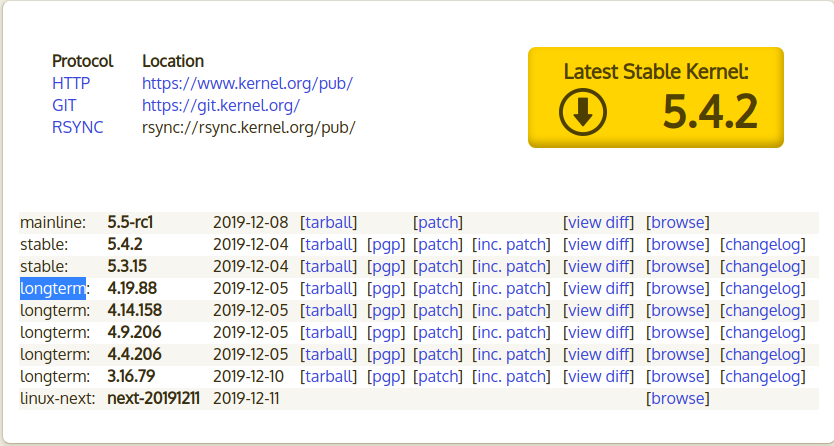

We can go to the kernel archive and found there the kernel that are stable and under operation release use. The meaning of longterm is that this kernel version will be available for long time because one of the operation system like Ubuntu or centOS or Red Hat may maintain and using this kernel version, this is why you may be seeing some old kernel version in that site.

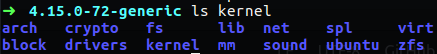

If we going to the kernel folder under the kernels version that I showed up earlier, we will see that every module lies on the most appropriate folder.

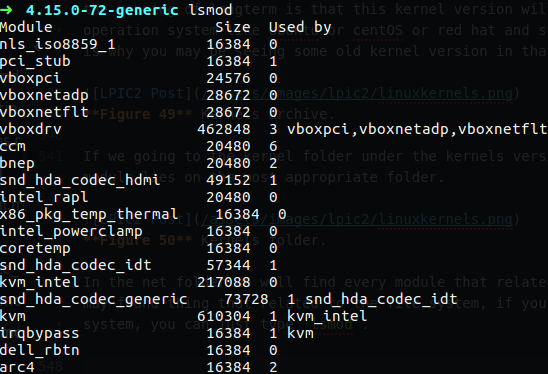

In the net folder we will find every module that related to the network card and such, in the fs folder we may found thing that related to the file system, if you want to see every module that are enable on your system, you can just type lsmod.

Figure 51 lsmod to see the modules.

Figure 51 lsmod to see the modules.

As you can see the modules that are enable print out on my screen with lsmod, you can see the module name and it’s size, you can also see what modules are depends on which of the modules as example the vboxdrv module is the module that responsible for the vbox on my PC I guess, and there is three modules that relay and depend on that one which are vboxpci, vboxnetadp and vboxnetflt.

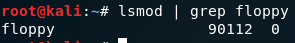

You can remove module by using rmmod you just need to know what is the module name, as example, let’s say that we want the floppy out, so we can grep it by using lsmod.

Figure 52 Find out the floppy by

Figure 52 Find out the floppy by lsmod.

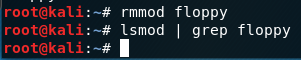

Now, in order to pop it out we need to use rmmod with the name of that module.

Figure 53 Remove the floppy module.

Figure 53 Remove the floppy module.

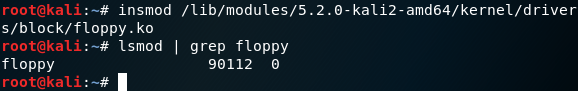

If we want the module back in, we need to use insmod, but for insert back from the dead some lost module we need to let him know the exact path for it. we can use find for finding that module.

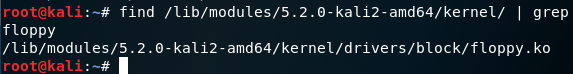

Figure 54 Finding the path for floppy module.

Figure 54 Finding the path for floppy module.

We now can use this path to insert that module back in. You can see that now I can find that floppy module in the active module list of lsmod

Figure 55 Inserting the floppy.

Figure 55 Inserting the floppy.

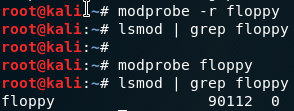

There is another way to remove module from the active list by using modprobe, in this command we can remove module and insert it back without specified the full path.

Figure 56 Modprobe for remove and adding module.

Figure 56 Modprobe for remove and adding module.

The command modprobe know what is the path of every module by using the modules.dep file, this file contain every module information and dependencies, to update this file we can use depmod -a that will go and insert the information of the modules to this file.

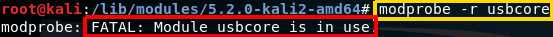

important thing you may want to know is that module that have dependencies can’t be remove out because it in use, in that case we will need to remove the modules that are use this module.

Figure 57 Remove module, error because it in used.

Figure 57 Remove module, error because it in used.

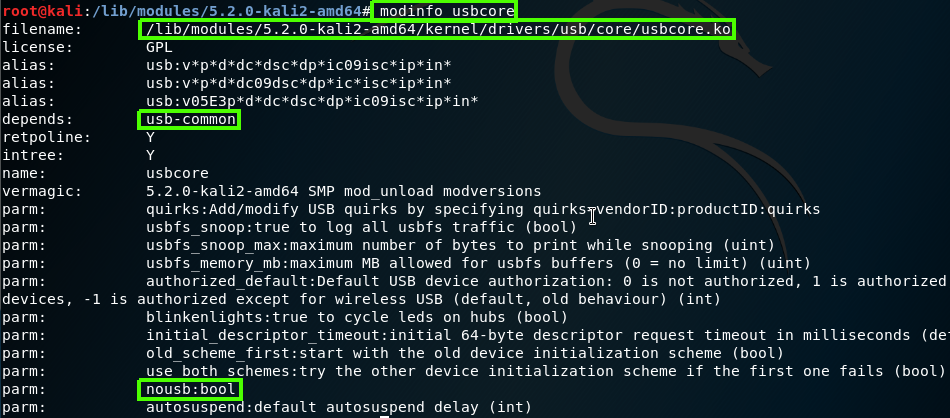

We can get more information about the module by using modeinfo, by using this commend we can found the path for that module, dependencies, version and more parameters that module have, so, in case we want some module use specific parameter, we will remove that module and use modprobe to insert it with the relevant parameter.

Figure 58 Module info by using modinfo.

Figure 58 Module info by using modinfo.

As you can see the usbcore have the param nousb which is boolean, you can insert that module and using that param as example modprobe usbcore nousb=N.

Please note: we also can use modinfo for finding the full path for some module by using the -n option, as example:

1

modinfo -n dummy

This will give us the expect path for that module

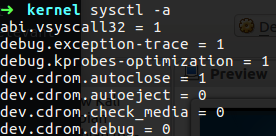

Please remember that all we talk about are the modules and not the kernel option itself, like enable the NAT operation for example. Option like that can be found on /proc/sys/kernel, in this folder you will found every option that are enable on your kernel, if you want to watch the configuration file which from there you enable them, you need to check the sysctl.conf that can found on the /etc folder, in that file you can enable more functionality of the system, like enable ip forwording or such.

You also have the command sysctl which can help you to see add change the option of your operation system

Figure 59 View the option that enable on my PC.

Figure 59 View the option that enable on my PC.

As an example we want to change some value of the running setting:

1

2

sysctl -w vm.vfs_cache_pressure=80

This command will change the vfs_cache_pressure to value of 80, but please note that this change are made on the running kernel, which mean if you go for reboot the default setting will come in place, if you want to make this changes permanent you need to make these changes on the sysctl.conf file.

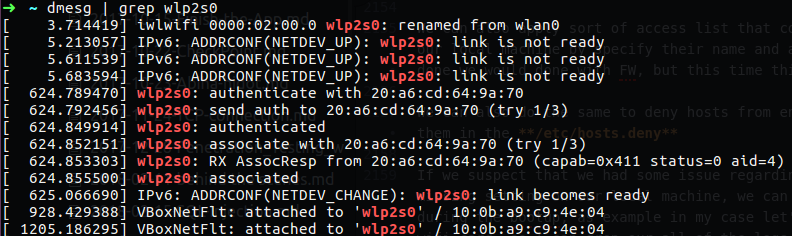

You may ask how the linux kernel knows when some device pluge in and lunch his appropriate module, this is done by the udev, this udev responsible for such a thing so he know to load up the usb module when some USB device pluge in.

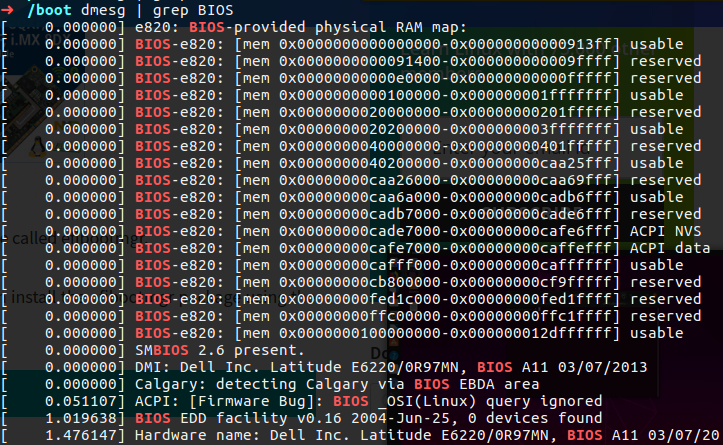

You can see what going on your commputer by using some command that related to udev, such as lsusb which can show us the devices related to usb,lspci that responsible for PCI bridge and we can use lsdev to display information about installed hardware or the dmesg that show us all the log we have from the system like in the boot which we can see on the boot the logs that our system run while bring the OS up.

We also have the udevadm monitor which can bring to the screen logs from the system in real time, you can see on the next gif how it work, I plug in my sundisk device and the udev found it and load it’s logs to my screen, he also showed us the remove log when I remove my device from that computer

Figure 60 UDEV monitor in real time.

Figure 60 UDEV monitor in real time.

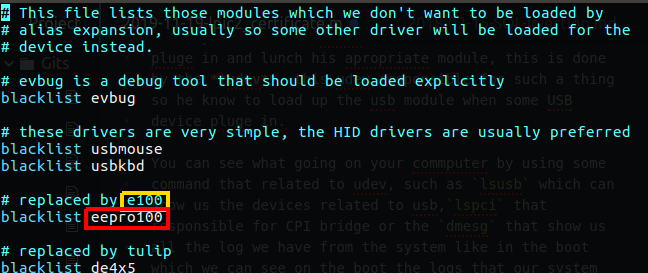

You also need to know that there is a blacklist of modules because let’s say that you plug in some device that have number of driver on you kernel that can support it, but you may want to use just one of them that are the best used for you.

Figure 61 blacklist.conf file.

Figure 61 blacklist.conf file.

In my case you can see that in the blacklist I have the eepro100 module which mean that if Ethernet card plug in, do not use that old driver, so that is the purpose of that blacklist, you can find that file in the following path: /boot/etc/modprobe.d/blacklist.conf.

Please note: if you need to find some file that you don’t know what is path you can always run find / | grep <string> which the string stand for what you search.

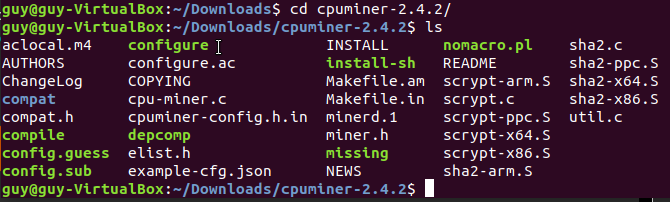

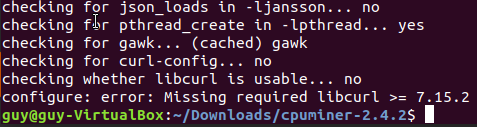

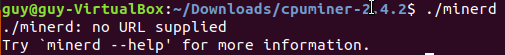

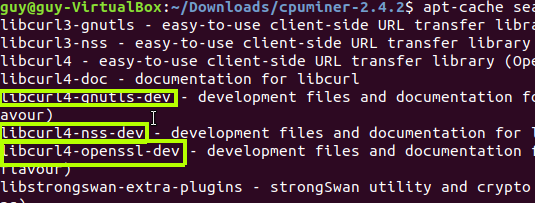

Now let’s say that we want to compile our own kernel so that our kernel will be the latest kernel that can be found in the linux kernel archive.

In the reality, if you asking why you ever customize you own kernel, it can be because you have some old linux system that used for just one purpose like FTP server or somthing like that, in that case you may want to compile kernel without any modules that you know you probably won’t be in use.

So first of all, I will display here the compilation process and installation of new kernel on my kali linux which is virtual machine that I use a lot, you can read more about that in my other PWK post. My current kernel version are 5.2.0 and I am going to compile kernel 5.4.3 which is that latest version in the kernel archive at this writing time.

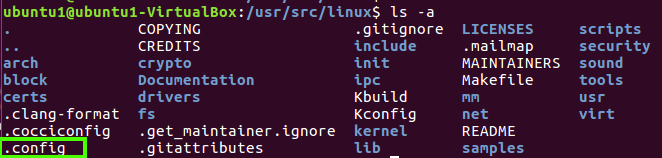

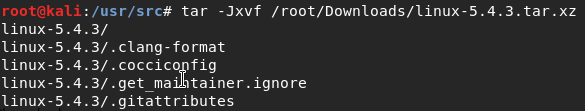

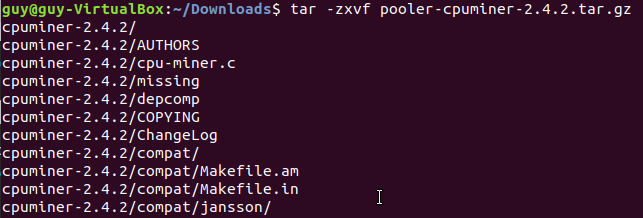

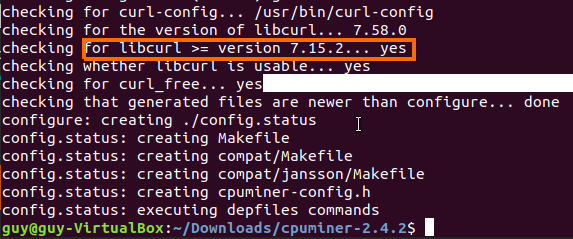

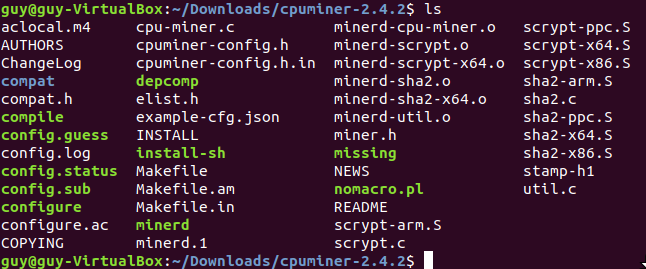

We need to use the /usr/src/ directory as our kernel, so after we download the source code and decompress it we need to create kernel folder or at least make symbol link to the kernel folder named kernel. After we download the kernel file from the kernel archive we can extract it by using the tar command.

1

tar -Jxvf <path to the compress file>

Figure 62 tar the kernel file.

Figure 62 tar the kernel file.

Than I run the following command in order to make the symbolic link to kernel folder.

1

ln -s ./linux-5.4.3 linux

Please note that on the Linux folder that we created we have the document for every module that can be use under our system.

For compiling the kernel we need tools that can help us to do so, in my case I need build-essential which is the Informational list of build-essential packages.

1

apt-get install build-essential

If you want to make kernel on fedora like distro you may need to install “Development Tools”, qt-devel and ncurses-devel.

Now we want to customize the kernel, to do so we going to use make command, this command help us to prepare the kernel and create the configuration that needed, the make command work with target, which mean that we choose some target that tall the make what to do, as example

1

make clear

This make command going to remove most generated files, it also keep the configuration and enough build support to build external modules.

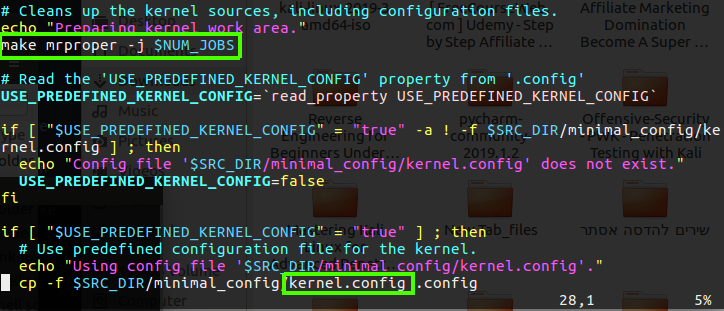

The first thing we going to do is the make mrpreper command which going to remove all generated files + config + various backup files, this will make the kernel as fresh, like let’s say that you make some kernel file but didn’t install it because you has some other thing to do, and after a while you came back to you computer so you can clean up what you did and start over.

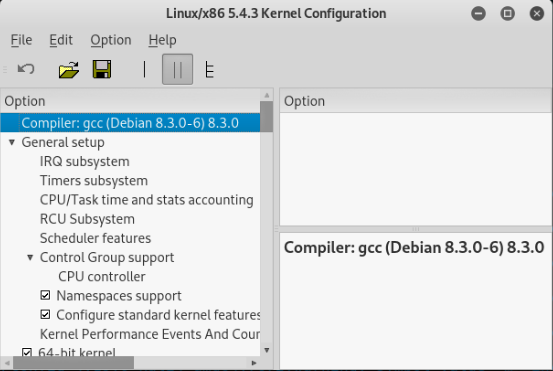

After that we going to configure the kernel, to do so we can use make xconfig, this will bring up configuration window in GUI mode that you can setup what you need in your kernel by using you mouse and mark the setting you want.

I my Kali Linux I had a lot of issue that related to some pkg that was needed, if you have such an error, the log of that error will tell you what pkg is missing and all you have to do is apt get install that pkg.

In my case I mange to run xconfig which bring me the configuration menu in GUI mode up to the screen,

Figure 63 GUI for configuration menu.

Figure 63 GUI for configuration menu.

After that case I decided to run the kernel compiling stuff under other machine, and we will continue to see more in my VM ubuntu.

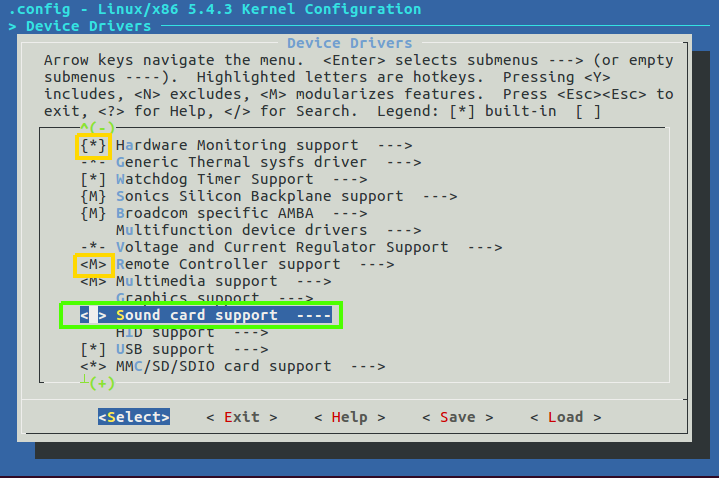

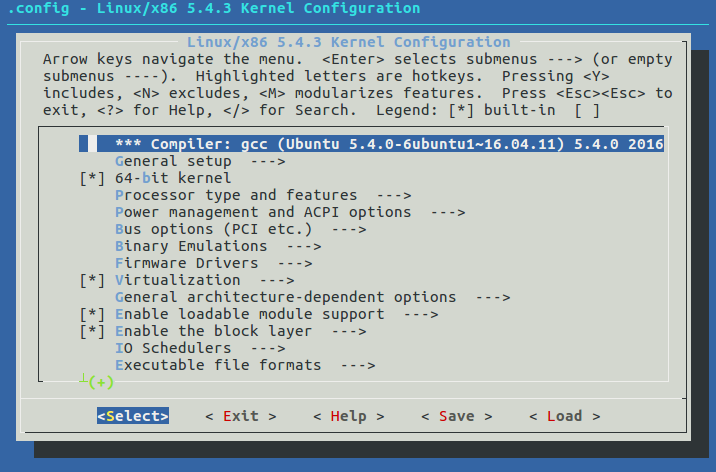

On my Ubuntu I run the make menuconfig, and that bring me the menu for the configuration in my terminal, in this menu we can choose line in the xconfig the setting we want to be on our kernel.

Figure 64 Setup config in terminal mode.

Figure 64 Setup config in terminal mode.

In that menu we have several option for each device driver, the M stand for module, this is mean that this driver can be use as a module if needed but it is not part of the kernel, however the asterisk sign “*“ stand for that module will be a part of the kernel and if so, you won’t be able to disable that module from your system, now, if you leave the chosen feature (module) empty, this will mean that this module won’t be available, this is mean that we won’t be able to load it to the kernel even as module.

You can see that the Hardware Monitoring support mark with asterisk which mean that this module will be loaded to the kernel, than we won’t be able to disable it, the Remote controller support will be load up as modularize feature which mean that we will be able to load or disable this module if needed, I unmark the Sound card which mean that this module are disable and can’t be use as a part of the kernel.

Now after we finish we need to save our configuration, this action will create some .config file that can be showed under our linux folder.

This file contain all of our configuration, you can also use make config, but this option will bring you a lot of questions that you must answer, and if you done any mistake you will need to start over, this is way the other option are recommended.

If you use make oldconfig it will take the configuration of the running kernel and load it up to your config file which can be very convenient if you want just enable or disable specific module.

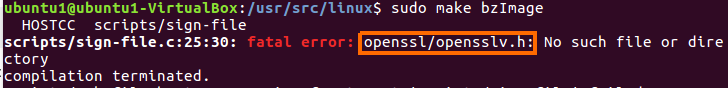

Now we want to make the compilation, so we need to run make bzImage, this going to take some time, this command will create for us the image file. Please remember that zImage are used for tiny file (512k) and bzImage are used for more larger files.

In my case when I tried to run make bzImage I got error about the openssl that can be found, after searching this error on google I found that I need to install libssl-dev which used for openssh development pkg.

I tried to run the make bzImage again and now it work, I saw few warning messages that complain about pkg that are missing but it keep the process to make the bzImage which going to be our kernel that contain the permanent modules for our operation system.

After it done to do it’s magic, the kernel will be at the arch/x86/boot/bzImage, and now we need to compile the modules, we can done it by using the command make modules and it also will take a mount of time.

At the end of this process it will run depmod which will create the modules.dep file which contain all the modules information and dependencies.

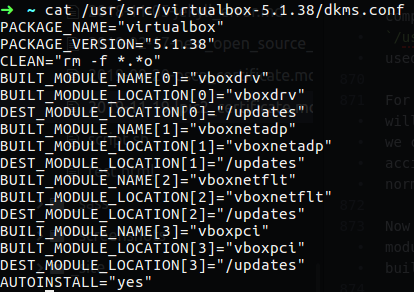

Now I want to talk little about the DKMS, this is sort of direction about how to build specific kernel module, this is mean that the DKMS contain the configuration file to tell the system how to build/configure the module and what its name is.

Let’s take a look in my local Ubuntu system, in the /usr/src/ directory I have module for virtualbox and that module use the dkms.conf file that contain direction for build the module in my system.

Figure 75-1 DKMS configuration file.

Figure 75-1 DKMS configuration file.

You can see that this file contain information about the package name, version, build module name, build module location and so on. As you already know for build module we use make command, it’s more likely that the module we going to install will be some c file and we use make to compile it, after it finish to do it magic we find in the current directory ko and o file and now we can run make install for installing the module, the DKMS can do all of that for us, we just need to specify what to do in the dkms.conf file.

Let’s go back to the make modules command we talk about erlier, so after the make modules finish we can find the modules we compile under the /lib/modules/ folder which will be contain the modules folder as the name of the kernel version we compile.

Now we need to install the modules with the command make modules_install it will install the modules under the /lib/modules/kernel-version which is the kernel version of our modules.

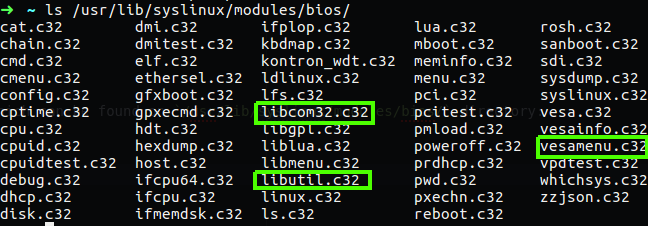

At the end of modules installation you will see that it run depmod which is build the list of every module and it’s dependencies, now all we need to run is make install, this command will install the kernel on our system and it use dracut which going to make some changes in our boot folder and in the GRUB to make some new option to load the new installing kernel so we can choose it one the GRUB menu, it also create the initrd which is minimal file that use in the RAM to load up the kernel.

Just think about that, you boot up your system and your GRUB need to load up your kernel, your kernel contain many modules for manage the devices parts, he need to load them up from the hard disk, but there is a problem, he can’t use the hard disk because he need module to do so and all of the module are in the hard drive, so for this issue there is the initrd, this file contain minimal modules that needed to load the hard disk for example, after that GRUB load the kernel it will be able to load more kernel modules from the hard disk.

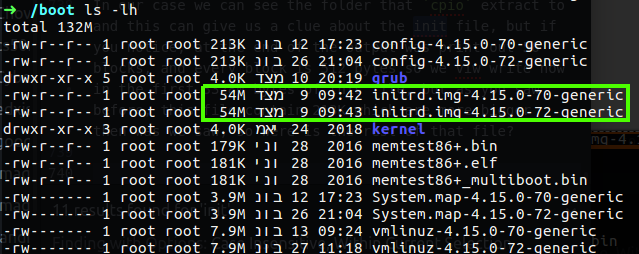

You can find the initrd under boot folder, usually every kernel have it’s own initrd, in my case my ubuntu contain 2 image of the kernel, one is 4.15.0-70 and the other is 4.15.0-72, the same numbering code have on my initrd files.

Please note:At boot time, the boot loader loads the kernel and the initramfs image into memory and starts the kernel. The kernel checks for the presence of the initramfs and, if found, mounts it as / and runs /init. The init program is typically a shell script. Note that the boot process takes longer, possibly significantly longer, if an initramfs is used.

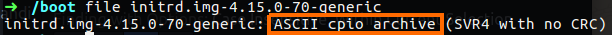

Let’s take a closer look at this file, it will help you understand more about this file, so I’m run the command file on one of the init file to check what is the type of that file, in that way I will be able to find out how to read that file.

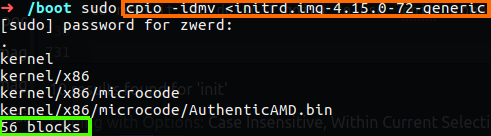

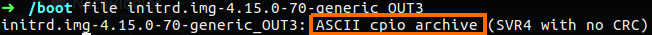

As you can see the type of this file is an archive and it’s cpio file which is compress, so now we know that we need to decompress this file in order to be able to read it, to do so I going to use cpio command.

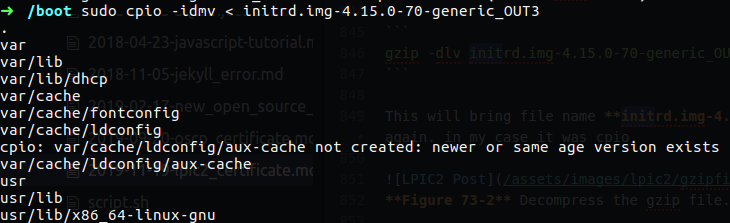

Figure 70 Extract from cpio file.

Figure 70 Extract from cpio file.

The option -i stand for extract files from an archive and the -d create leading directories where needed, the -m retain previous file modification times when creating files and -v used for verbose.

In our case we can see the folder that cpio extract to and this can give us a clue about the init file, but if you notice, at the end of the output was print out 56 blocks, and every block is 512 bytes so we view right now in the first 33280 bytes of this file, but as you saw before, this file contain 54M which are more bigger then 33K, so were is the rest of that file?

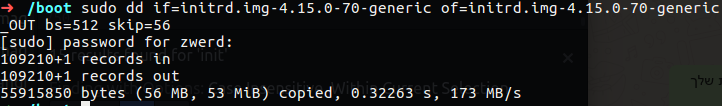

This situation let’s us know that not all the file was open by the cpio, and the rest of that can be something else or new cpio file because in the cpio there is an header that he knows the start and finish of file, so we need the rest of the file, to achieve that goal we can use dd to take fixed size for that file and output it to use file.

1

dd if=initrd.img-4.15.0-70-generic of=initrd.img-4.15.0-70-generic_OUT bs=512 skip=56

In this command I specified the input file which is the initrd.img-4.15.0-70-generic and the output file going to be initrd.img-4.15.0-70-generic_OUT, the block size are 512 bytes and we want to skip the first 56 blocks which mean the rest of that file will be taken.

Figure 71 Create new file using dd.

Figure 71 Create new file using dd.

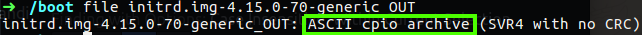

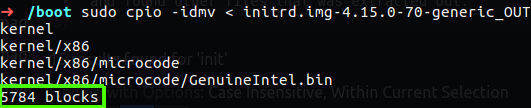

What we need now is to use file again to see what is the type of our new file we have.

So this also cpio file, I used cpio to extract that file and found other files that was extracted out.

Figure 73 Extract again using cpio.

Figure 73 Extract again using cpio.

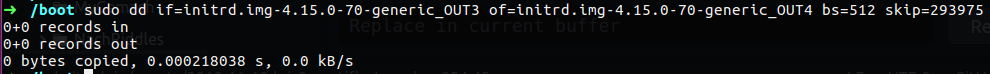

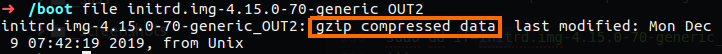

Now we need to repeat the process again with dd and after that using file command.

1

sudo dd if=initrd.img-4.15.0-70-generic_OUT of=initrd.img-4.15.0-70-generic_OUT2 bs=512 skip=5784

Figure 73-2 Checking the new file with

Figure 73-2 Checking the new file with file command.

You can see that we found gzip file so now we need to use gzip to see the contect of that file, in the gzip case the file must contain extension of gzip else we will get some error, so I use mv to change the extension and then use the gzip command.

So for change the extention:

1

sudo mv initrd.img-4.15.0-70-generic_OUT2 initrd.img-4.15.0-70-generic_OUT3.gz

And now extract the gzip file:

1

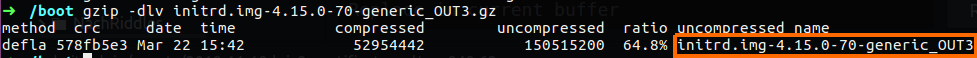

gzip -dlv initrd.img-4.15.0-70-generic_OUT3.gz

This will bring file name initrd.img-4.15.0-70-generic_OUT3 so now we need to check that file type again. in my case it was cpio.

Figure 73-2 Decompress the gzip file.

Figure 73-2 Decompress the gzip file.

Figure 73-3 Checking the file type again.

Figure 73-3 Checking the file type again.

So I decompress it.

Figure 74 Extract again using cpio.

Figure 74 Extract again using cpio.

To see if this is the end of our search of initrd we can can use dd and if he print out record of zero, it’s mean that this is it.

Please nore: the kernel configuration parameters can be found on the config file, this file is also on the boot folder, so as you need to remember that you can change some parameter by make menuconfig but if you forget about some setting that you need you can add it in that file before install the kernel. Also remember that the config name is usual as follow config-<kernel version>.

So far we saw that on GRUB we have two files, the kernel file and the init file, after we done all the compiling stuff we can find the kernel and it’s documentation under the /usr/src/linux//usr/src/linux/documentation, we also can find there the drives that going to be in used on our system and the configurations files that related to this linux kernel.

For making initrd file we can use mkinitrd command (on RedHet and CentOS distro), if you use Ubuntu you will need to use update-initramfs or mkinitramfs commands, those command will create initrd file which we can move to our boot folder and update the GRUB to use it, so in case you find the initrd dummege or it accidentally deleted, you can use those command and create your new initrd file for booting the system normally.

Challenge 201-1

- Create your minimal linux kernel and archive it as iso file (you can use the minimal linux live project).

- Run the file on virtual machine and check if it working correctly.

- check if you have network activity, if you haven’t, try to solved it and check connectivity on your local lab.

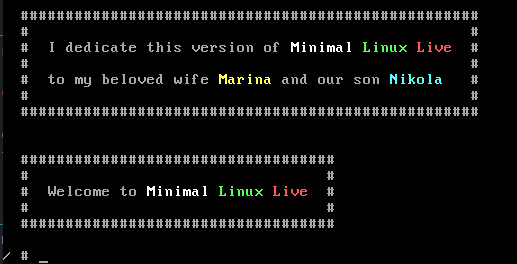

To solve this issue I will go with you step by step how to make new minimal linux kernel, I am going to use the minimal linux live project that was written by davidov.i@gmail.com, you can find the minimal linux document at the following URL:Minimal Linux Tutorial.

1. Create minimal linux kernel.

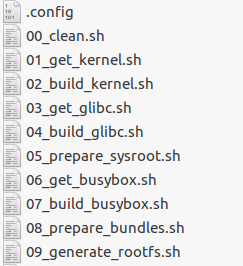

So first of all I login to the following URL:https://minimal.linux-bg.org/, I download the file minimal_linux_live_15-Dec-2019_src.tar.xz, I extract the file by using tar -Jxvf and folder with the same name was created on the local directory which are contain the script file for making new ios image, the name of that file is build_minimal_linux_live.sh which is executable file.

By running this file we run all of the script that exist in that folder, like 02_build_kernel.sh and 05_prepare_sysroot.sh.

while running that script, on my terminal I saw what it did which going to create new file and setup the .config file and make it and create initramfs which going to be use under the iso file which I am going to have after that script will done it magic, and this take long time to cook, but on the second script 02_build_kernel.sh you can see that we use mrproper to clear the local config file and after that we going to create new config file base on the kernel.config file.

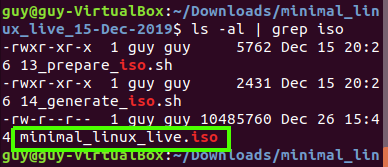

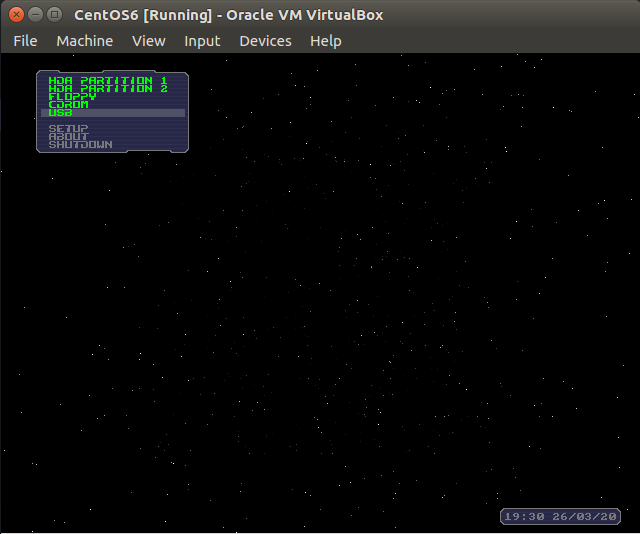

Now we need to find the iso file which we going to use on our virtual machine, in my case I have vbox to I am going to create new machine with that file image and load the machine up.

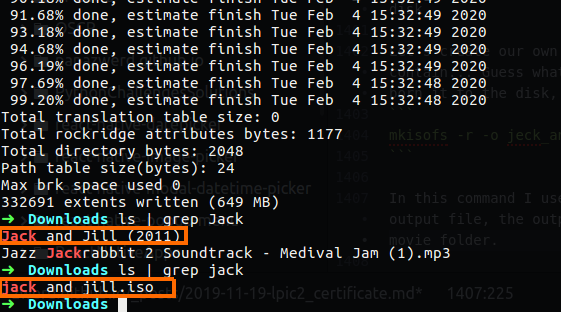

The iso was created on the current directory named ./minimal_linux_live.iso, I found that by using the follosing command.

1

find . | grep "\.iso"

Now I need to transfer that to my local machine, I not going to use usb or any sort of device, I am going to run nc again and transfer the file over my local network.

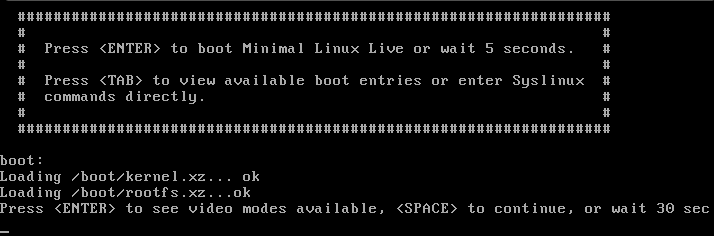

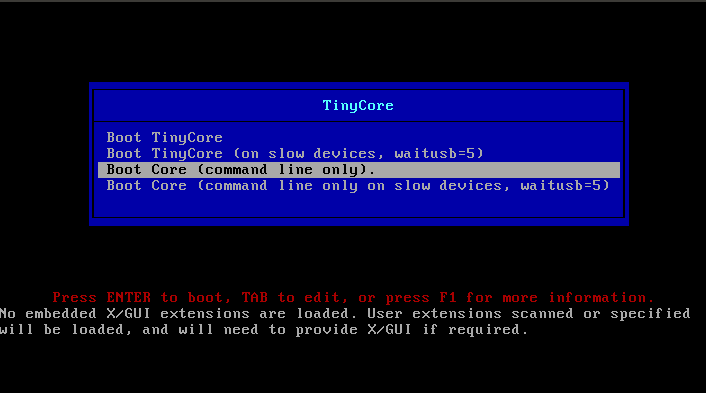

2. load up the iso file in the vm machine

So now I create new machine and set my iso file as the disk to load it at boot time.

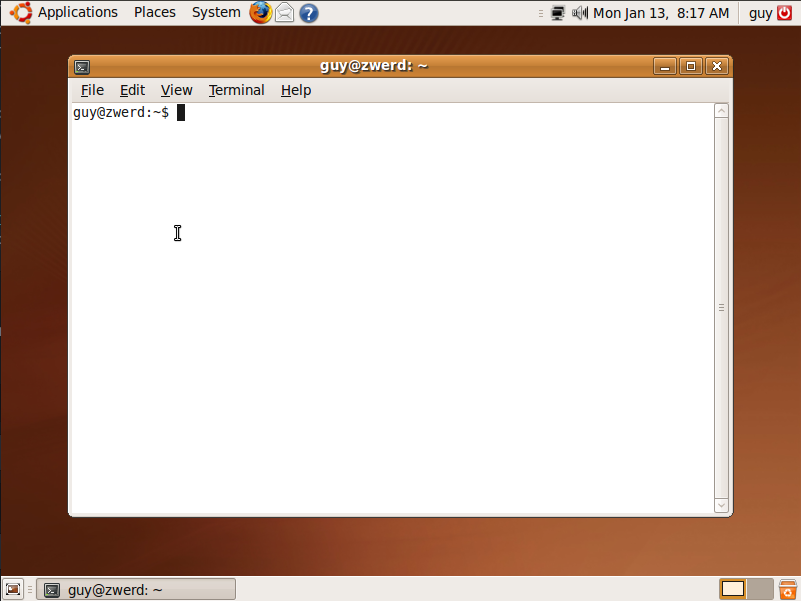

You can see that it start to boot up, and I need is to wait and see if it going to bring me some minimal linux environment with tools to work with.

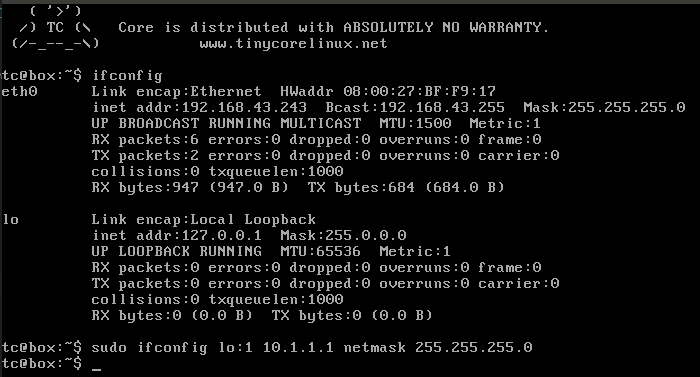

It’s look good and I succesfully run some bash commands. I also have network interface with IP address that he get by DHCP.

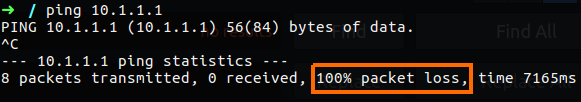

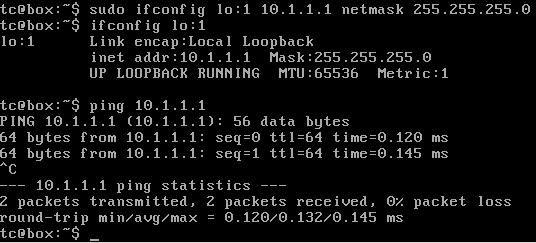

3. check network connectivity.

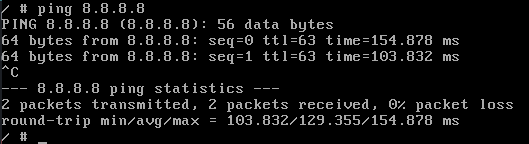

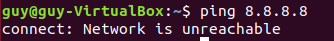

I just run ping to 8.8.8.8 which is google DNS server and I saw reply from this server.

Figure 80 Checking network connectiviry.

Figure 80 Checking network connectiviry.

So we finish our challenge, so we can proceed foreword to the next chapter.

Challenge 201-2

This time we going to do something that can help us in real world scenario. It’s not something that can happen on a daily basis, but it seems to be a good exercise.

- Setup CentOS on virtual machine and after full installation on your virtual hard disk verified the initrd file.

- Go to the GRUB file and remove the initrd line.

- Remove the initrd file and reboot the system.

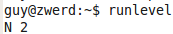

- After you found out that the system can not start, find the way to soled it

1. setup CentOS on VM and verified the initrd file

So, I download the file from CentOS site, I chose the CentOS 6 for that exercise but you can do the same on more advance system.

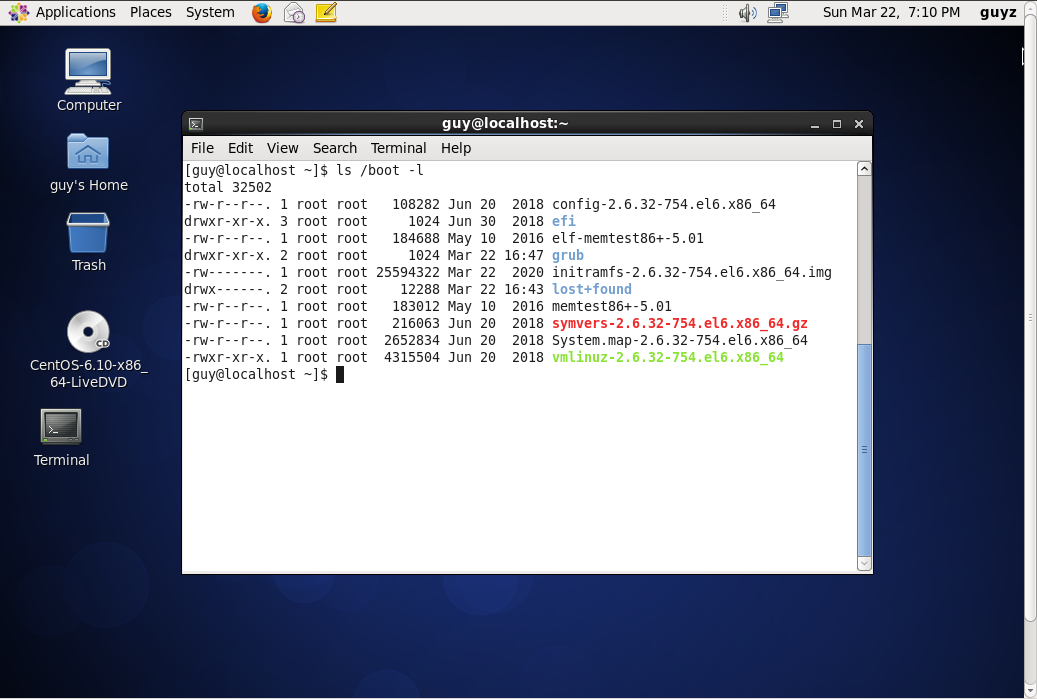

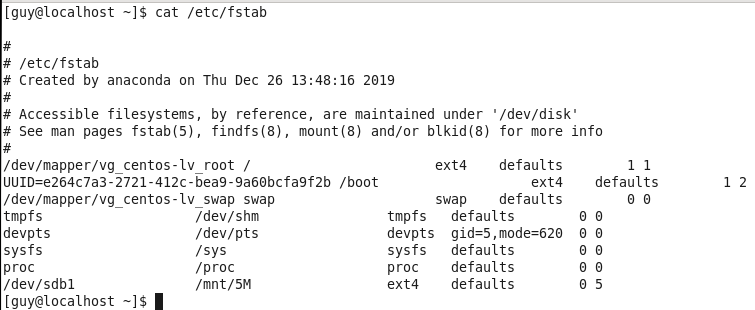

In the first step I make new virtual machine and load it up with the ISO file of the CentOS. After it cam up I start the installation and wait for it to finish. After that I restart the system and load it up from the virtual hard disk and checking the system, I found the initrd on the boot folder.

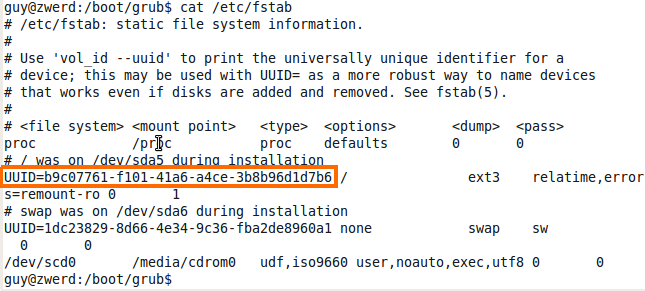

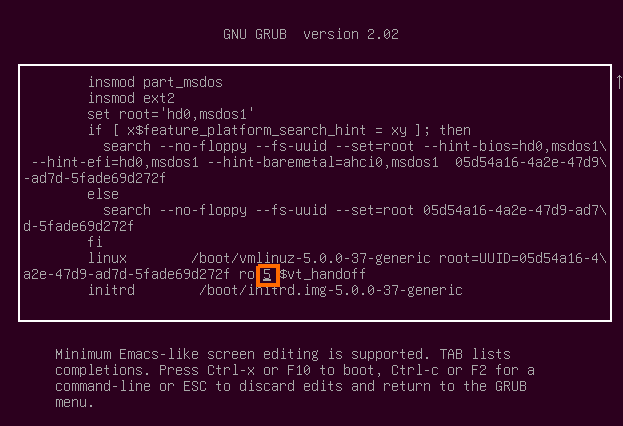

2. Remove the initrd line from GRUB

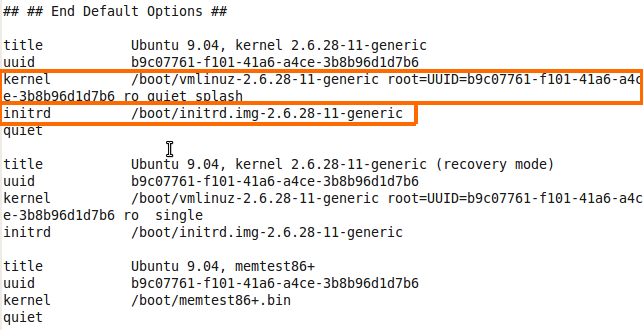

You can see the I have folder named grub which is contain the GRUB configuration on my local system, I need to change it and remove the the line that contain the initrd, this line is used to load up some minimal modules to load the hard disk.

I remove the line by using vim, you can also use nano if vim is difficult for you but remember that knowing to use every tool in Linux will help you as you proceed foreword.

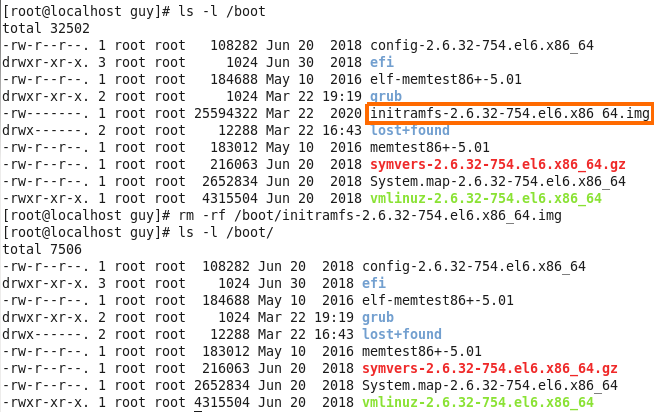

3. Remove the initrd file

Now I need to remove the initrd file from my boot folder, so I use the rm -rf, but before that I verified the file on the boot folder to see that is exist.

Figure 81-2 Remove initrd file.

Figure 81-2 Remove initrd file.

4. Reboot the system and fix the issue

Now this is time to reboot the system to see if it can boot up normally. In my case it stack on black screen without any way to stop the start process, so I power off the system.

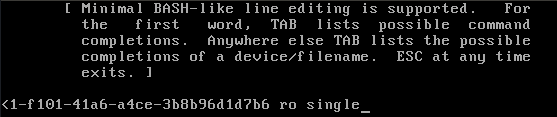

So let’s say that your friend can’t boot up the system and he knows that you are an expert in everything that related to Linux so he ask for help, in that case we can see the line that said:

1

kernel panic ... can't mount root fs ...

In that case we know that the kernel tried to load up the root filesystem and he tried to do so with the initrd, so if we get such an error it because he cannot find the initrd file.

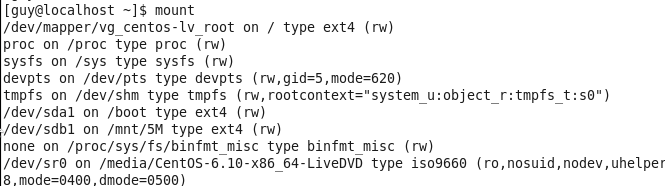

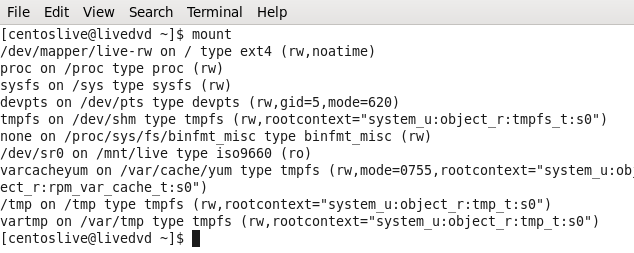

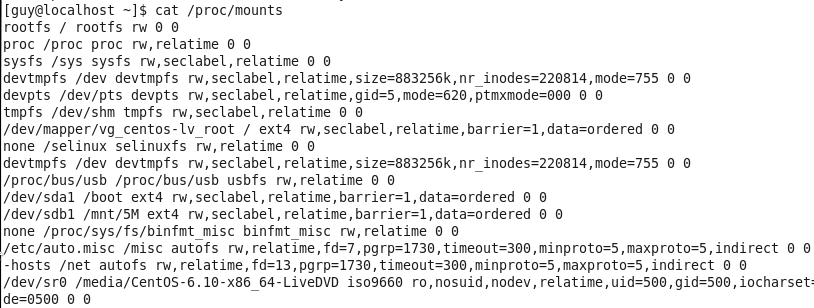

To solve that I boot up the system by using external device like bootable usb or optical disk and bring the system up for making change in the boot folder for that system.

Figure 81-4 Mount command after boot from bootable device.

Figure 81-4 Mount command after boot from bootable device.

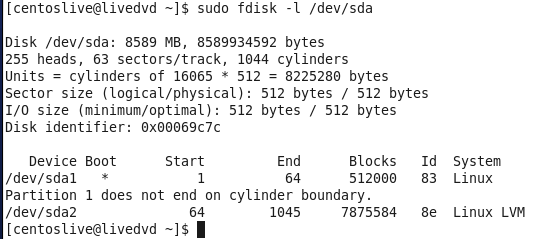

You can see the I have not any /dev/sda storage in that system becouse I boot it up from optical disk, now I need to find the hard disk and mount it to my system.

Figure 81-5 My local hard disk using fdisk.

Figure 81-5 My local hard disk using fdisk.

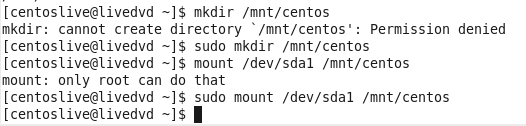

Now after I know what is my hard drive I need to create mount point, which is in my case going to be /mnt/centos/ folder and I create that folder by using mkdir, after that I need to use mount for mounting the hard drive to that point.

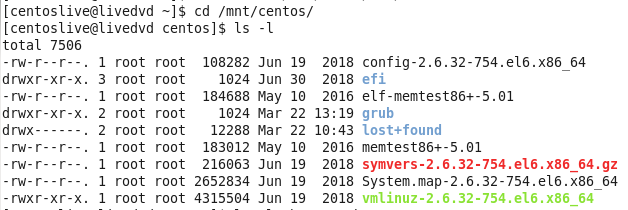

You can see that I had to run it with root privilege for making the mount point and mount that drive, now I need to see what I have on that folder.

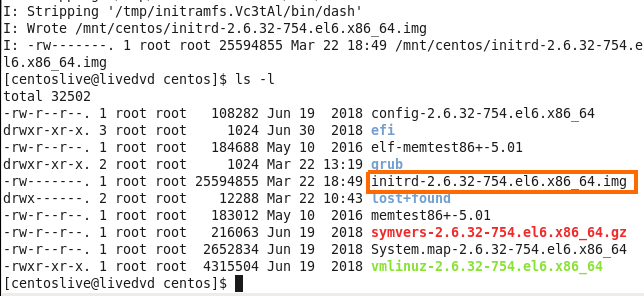

Figure 81-7 My centos boot folder.

Figure 81-7 My centos boot folder.

So I need to create new initrd file, I can do so with the mkinitrd command, in my case I want to specified the kernel version and I know that my bootable optical disk is in the same version so I use the uname -r which bring me the version of the system and create the file with that system code number.

1

mkinitrd -v ./initrd-`uname -r`.img `uname -r`

You can see that the image was successfully created.

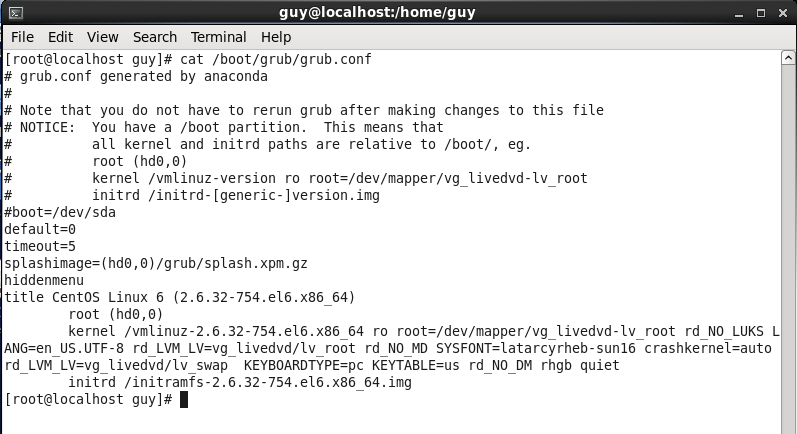

Now I need to update the grub file, on my system I use GRUB1 so I have not any automatic way to update the grub, I need to do so manually.

So I use vim to edit the GRUB file and I add the initrd line which contain the path to that file.

1

initrd /boot/initrd-2.6.32-754.el6.x86_64.img

After that I reboot the system to check if it working right.

Chapter 2

Topic 202: System Startup

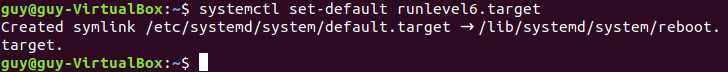

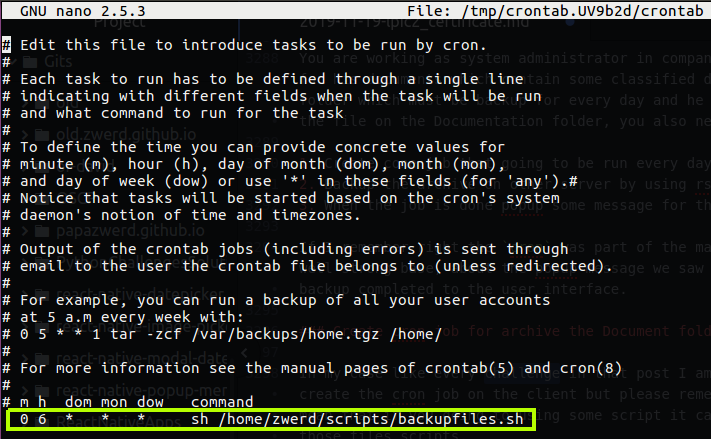

In linux systems we have the way to control the system mode we going to load up, we have numbers of system mode that some of them very useful and some of them are not in use, as example one of them is full system mode that contain everything that normal system need contain for work, we have also single user mode that can be use to do specific thing on the machine, that sort of control tool called runlevel. In the Linux world we can use run level to boot up specific mode of our operation system.

We can setup also runlevel as we want, as example we can create runlevel that enable on the system the mail service and disable apache2, in that way we can customize the system with specific tools and program that can be work on it, this is quite useful because let’s say you have user on your organization that use tools that related to design pictures or document and he need connectivity to the network and nothing else, in that case why we allow the vsftpd service for example, this is not useful for that user, so we can disable that on customize runlevel.

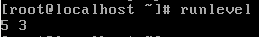

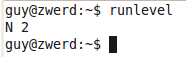

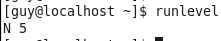

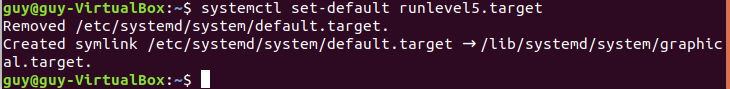

If we talk about systems like Red Hat systems in my case centOS you can run runlevel, this command will show us the runlevel that our system run for, in my case it was N 5.

Figure 82-1 My runlevel on centOS.

Figure 82-1 My runlevel on centOS.

The 5 means that we working now with runlevel 5, the N means that the previous runlevel was none, if we change the run level for 3, the numbers will be 5 3. To change the runlevel on the running machine we can use the telinit.

1

telinit 3

You can see that I am in command line level, so in that case I can change it again to level 5 and it will bring up the GUI environment again, you can see now that if I run runlevel command I can see that the last runlevel was 3, and now it set on level 5.

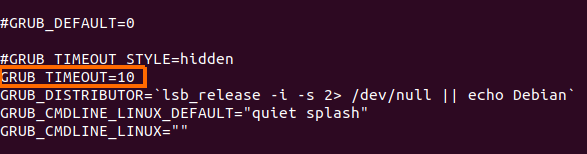

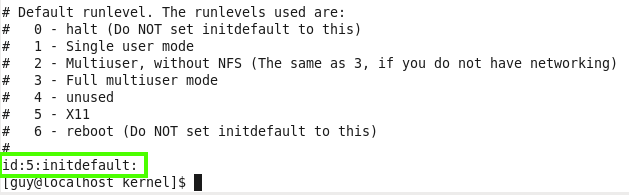

Now let’s look on the configuration file of that inittab, you can find that file on /etc/inittab, this configuration file contain several init level. 0 - halt which mean that if we use that init, the system will go shutdown, this is why it is recommended not to setup the runlevel at this init level or at level 6, 1 - single user mode for administrative tasks, 2 is multiuser mode without NFS and 3 is fill multiuser mode, 5 is x11 which have the nice GUI view with desktop environment.

Figure 84 inittab file on centOS.

Figure 84 inittab file on centOS.

You can see on the bottom of that file the line id:5:initdefault:, we can change it value to what init level we want and on the next boot it will bring the new init level up, what you mast not do on that file is to setup the run level for 0 or 6 which cause your system won’t be able to load the user enviroment and we can’t work in that case.

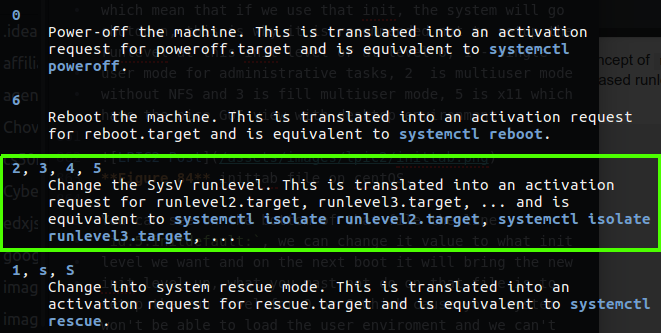

If you want to see your level on Debian like as Ubuntu you can also run runlevel command or check your sysinit.config file on /etc/init.d folder this file contain the init level for distribution like Debian and you can change it what ever you like but please notice that this run level in my case on Ubuntu is pretty different from Red Hat like distrabution, in my case I found that information in the man page of telinit file.

Figure 85 Runlevels on Ubuntu.

Figure 85 Runlevels on Ubuntu.

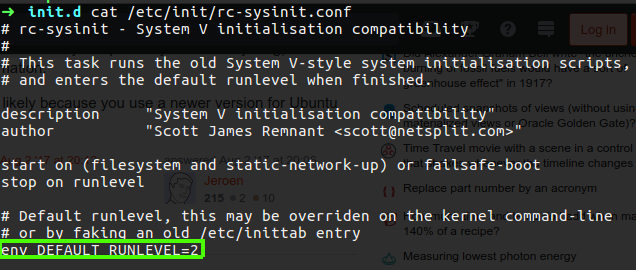

You can see that the most used are 2, 3, 4, 5 and they call it SysV instead of runlevel. You also can see the current runlevel you run on Ubuntu on the rc-sysinit.conf file.

Figure 86 rc-sysinit.conf file on Ubuntu.

Figure 86 rc-sysinit.conf file on Ubuntu.

Please note:we can set the runlevel also on the boot loader which in my case is the GRUB and we can specify the RUNLEVEL we want on the menu.lst and run grub-install and every time the system will reboot it will use this RUNLEVEL.

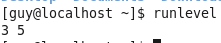

Let’s go back to centOS machine, we have folder called rc.d which contain the folders for each runlevel, as example the rc3.d is conatin simbolic link of the utility that going to be active on that run level.

Every simbolic contain in it’s name sign for it’s operation on that system, as example as you can see the first one utility is K01smartd the K stand for KILL which mean that this smartd will kill down if we change the current run level to number 3 on flay, the numbering is the sort of every utility, which mean the system will go every utility and take care of it, in my case the smartd will killed first and oddjobd is the second one service that going to be kill.

We also have service with their name contain S which stand for start, in that case the system will go one by one and start every such service, so as you can see we can change specific runlevel and disable it’s working services or enable others by just chaning the name of the simbolic link, as example in the case of K01smartd.

1

mv K01smartd S01smartd

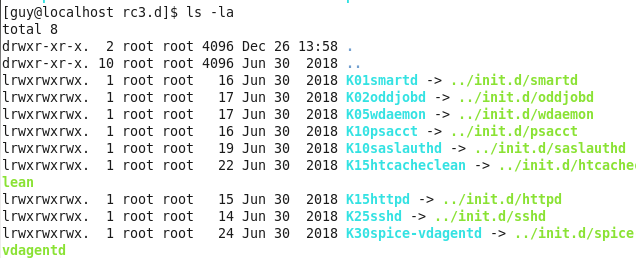

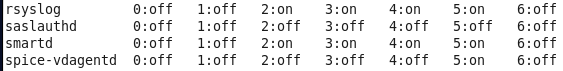

In that case if I switch to run level 3, I will be able to use smard service. You may also notice the numbering on every service, this number are allow us to choose the order of execute every service under this run level, We also change use tool to do that task chkconfig, if you run chkconfig --list it will bring you the list of every service and it’s operation state of every run level.

Please note: this chkconfig command is available on operation systems like RedHet, CentOS etc.

In my case I run the following command.

1

chkconfig smartd on

This will bring the smartd to be active in run level 2 - 5.

Figure 89 list on chkconfig - smartd are active.

Figure 89 list on chkconfig - smartd are active.

To change this service mode back you just need to use off option.

1

chkconfig smartd off

On Ubuntu the rc folders can be found under /etc and the concept is the same as we saw on centOS, the difference is on the change mode utility, in Ubuntu it’s update-rc.d and it’s do the same as we done with chkconfig.

1

update-rc.d dnsmasq start

If you want to change the operation state permanently you need to use disable or enable instead start or stop. You can also remove it from the symbolic links with the remove option.

So far we saw the command chkconfig --list that can help us to view all the services on centOS system or any Red Hat like distribution, on Ubuntu we can use the command netstat -tulpn as we saw on chapter 1, but for the matter fact we can use more handy command like service --status-all, this command will show us every service that exist on our system and each status.

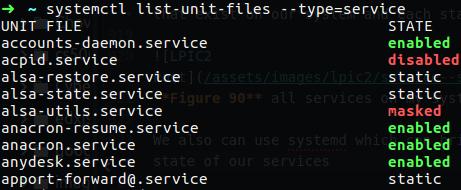

Figure 90 all services on my system.

Figure 90 all services on my system.

We also can use systemd which can bring us more clear state of our services, all we need to do is to run systemctl.

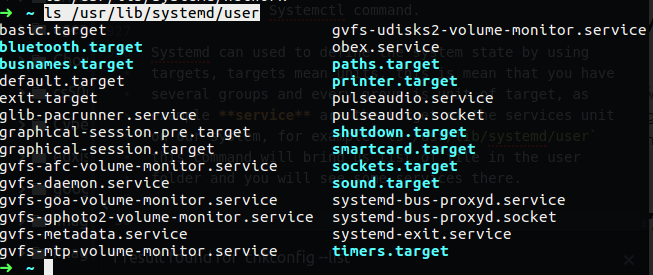

Systemd can used to define the system state, we have extensions named .target or .service and etc, for example ls /usr/lib/systemd/user this command will bring us list of file in the user folder and you will see some services there.

Figure 91 systemd user command.

Figure 91 systemd user command.

On figure 90 you can see that some services are enable and some of them are disable, the services that are on static state means that they have some service that they depend on, so if you want to kill that service it’s can’t be done until we will stop the depended service.

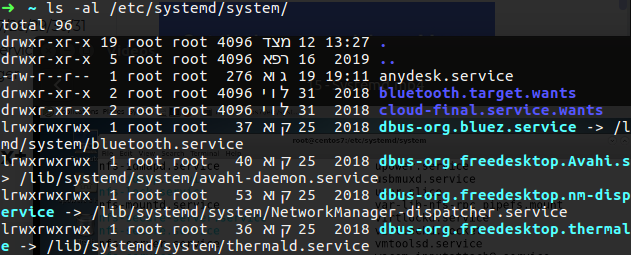

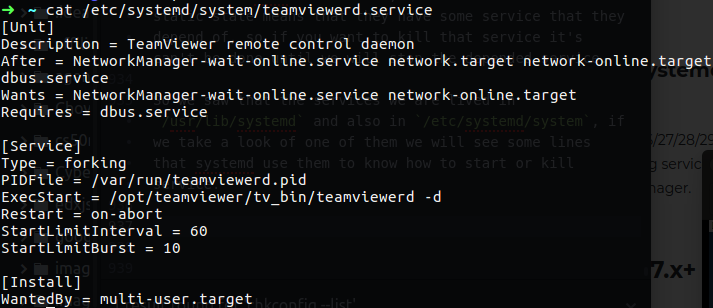

So we saw that the services are lived in /usr/lib/systemd and also in /etc/systemd/system, if we take a look on one of them we will see some lines that systemd use them to know how to start or kill service.

Figure 92 the service itself on system.

Figure 92 the service itself on system.

As you can see this file contain some description and after field, this after field mean that the services - NetworkManager-wait-online.service network.target network-online.dbus.service, must be on enable state and only after that was done, we can bring the teamviewerd.service service up, so in that case the dbus.service must to be run before we can be able to use teamviewerd service, so if we run the following command we will see that dbus is on static as we saw on figure 90:

1

systemctl list-unit-files --type=service | grep dbus

you can see also the ExecStart field that contain the exact command for running that service.

There is also a third location available in which you can place files to override your unit definitions, this is the /run/systemd directory. I want to do some experiment (credit filbranden) We have the /run/systemd directory that contain the systemd notify, as much as I understand this norify allows a process to send a notification to another process, so let’s create our own service and look about the status of that service.

First of all we create a script like the one below somewhere in your system, such as /usr/local/bin/mytest.sh:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

#!/bin/bash

mkfifo /tmp/waldo

sleep 10

systemd-notify --ready --status="Waiting for data…"

while : ; do

read a < /tmp/waldo

systemd-notify --status="Processing $a"

# Do something with $a …

sleep 10

systemd-notify --status="Waiting for data…"

done

We use sleep 10s so we can see what’s happen, when watching the systemctl status mytest.service output. Now we need to make the script executable:

1

$ sudo chmod +x /usr/local/bin/mytest.sh

After that we need to create /etc/systemd/system/mytest.service, with contents:

1

2

3

4

5

6

7

8

9

[Unit]

Description=My Test

[Service]

Type=notify

ExecStart=/usr/local/bin/mytest.sh

[Install]

WantedBy=multi-user.target

Now we need to reload systemd (so it learns about the unit) and start it:

1

2

$ sudo systemctl daemon-reload

$ sudo systemctl start mytest.service

Then watch status output, every so often:

1

$ systemctl status mytest.service

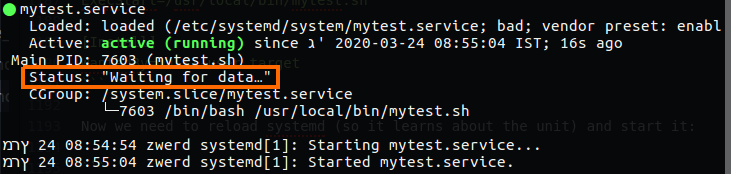

Figure 92-1 My test service is on active state.

Figure 92-1 My test service is on active state.

You’ll see it’s starting for the first 10 seconds, after which it will be started and its status will be “Waiting for data…”. Now write some data into the FIFO (you’ll need to use tee to run it as root):

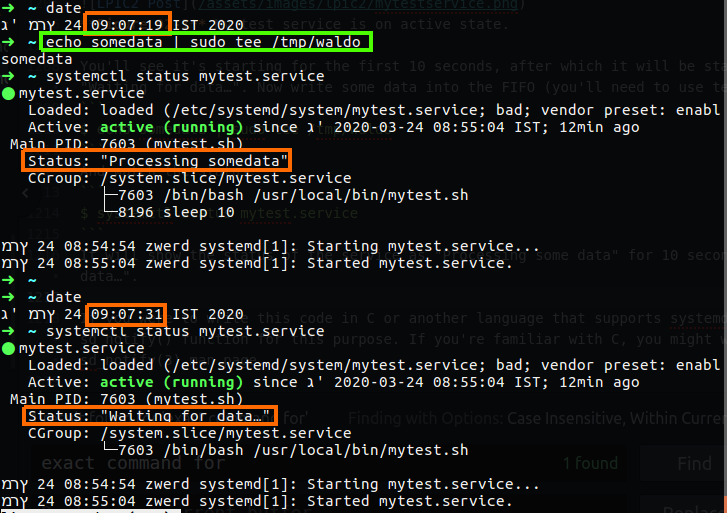

1

$ echo somedata | sudo tee /tmp/waldo

And watch the status:

1

$ systemctl status mytest.service

It will show the status of the service as “Processing some data” for 10 seconds, then back to “Waiting for data…”.

Figure 92-2 My test service status.

Figure 92-2 My test service status.

If you check, you will find that most of the services are symbolic link to other location and most of them are for /lib/systemd/system.

If we will use the list-units option in systemctl we will may see some services that was failed on the boot time or later, in this case if that service are importance we can bring it up by start it, or enable it.

For summery, this systemd with the systemctl command is another way to check and set the init files, this is also applied on many linux system like Red Hat and opensuse servers for enterprise, there is some distribution that don’t use systemd, but this is only on the desktop linux version, it’s more likely to find systemd in used on enterprises systems.

We also need to be familiar with systemd-delta, it may be used to identify and compare configuration files that override other configuration files. Files in /etc have highest priority, files in /run have the second highest priority, …, files in /usr/lib have lowest priority. Files in a directory with higher priority override files with the same name in directories of lower priority. In addition, certain configuration files can have “.d” directories which contain “drop-in” files with configuration snippets which augment the main configuration file.

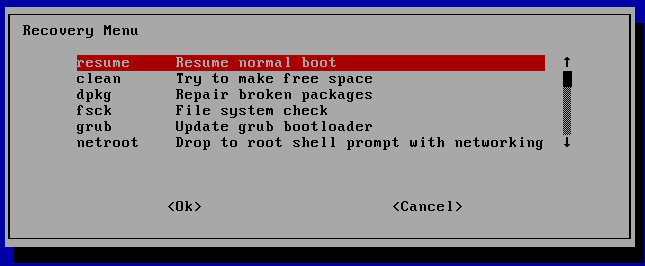

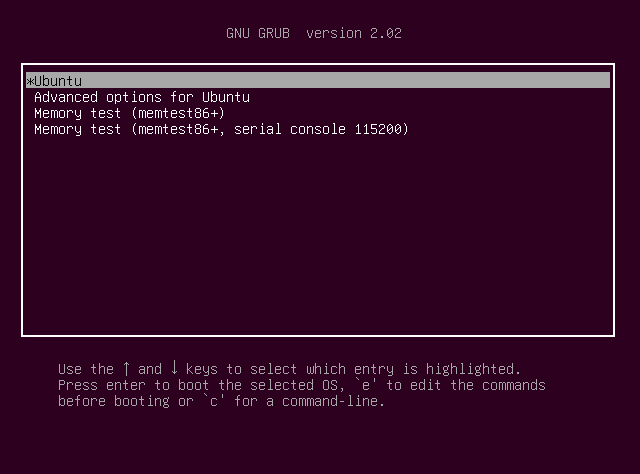

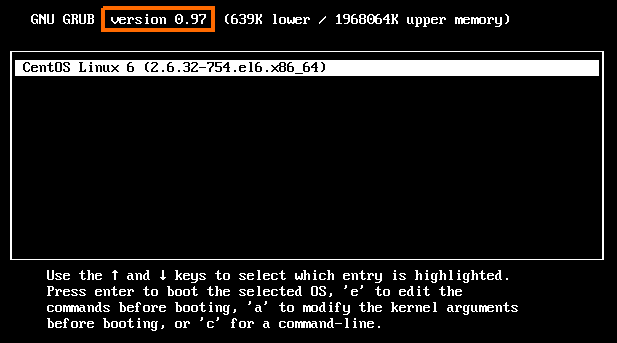

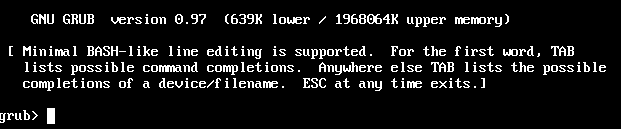

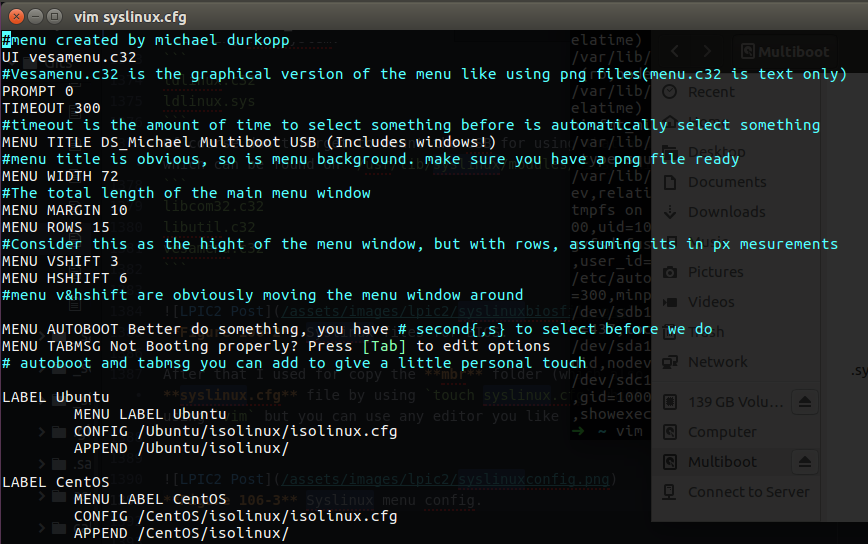

Now, for this chapter 2 we need also be familiar with GRUB legacy and GRUB2, this GRUB stand for GRand Unified Bootloader, this is bootloader which mean this is the first menu that the computer bring up, GRUB is a modular bootloader and supports booting from PC UEFI, PC BIOS and other platforms, in this menu we can choose what operation system we want to load up, let’s say that you have some DELL PC and you want that one partition will be Windows and the other contain Linux, you can do so and the GRUB is the menu which bring you the option to choose between the OS’s.

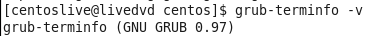

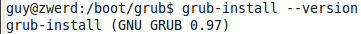

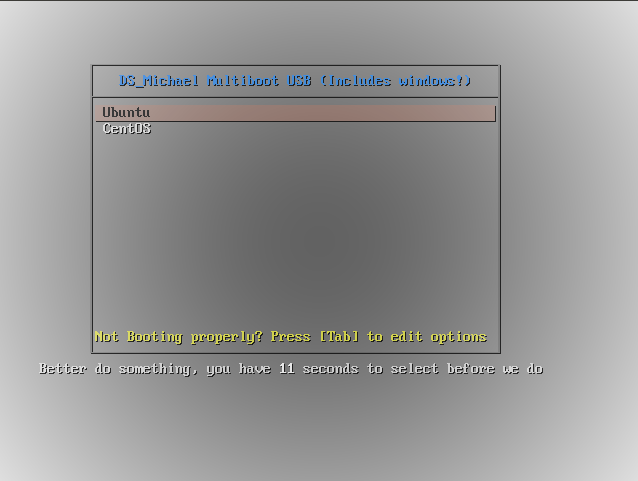

You must also be familiar with the different between those two, GRUB legacy is the first version of that GRUB project and on the most linux version you may see that GRUB is on version 0.97 like as follow in centOS (version 6)

Figure 94 GRUB 1 which is the GRUB legacy.

Figure 94 GRUB 1 which is the GRUB legacy.

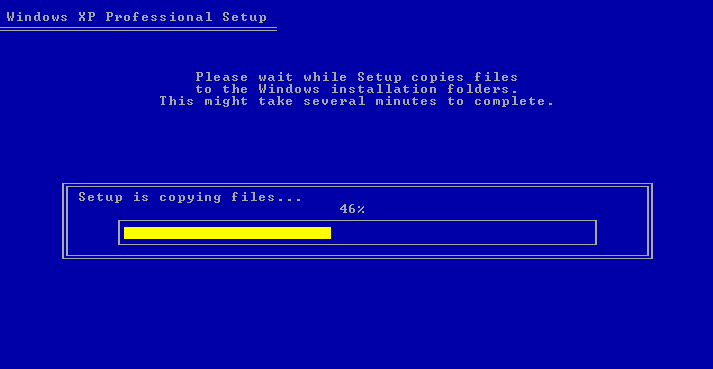

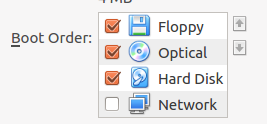

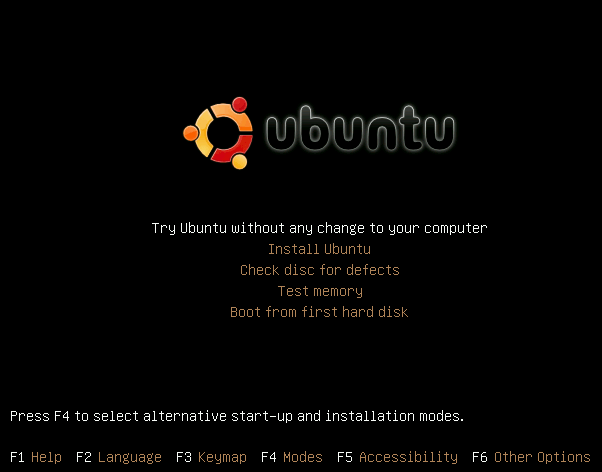

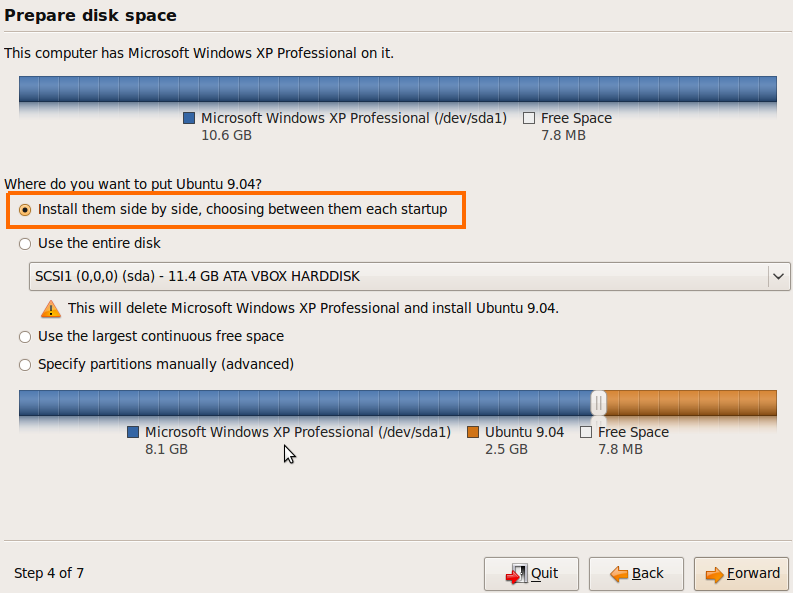

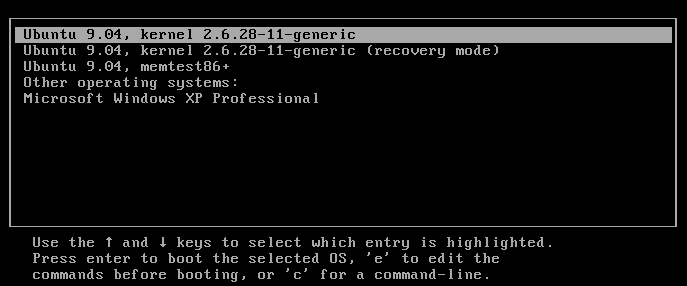

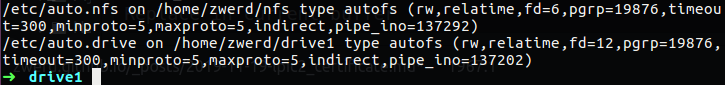

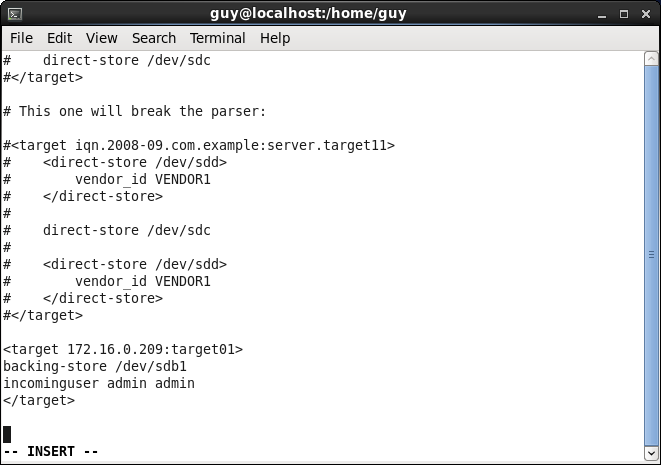

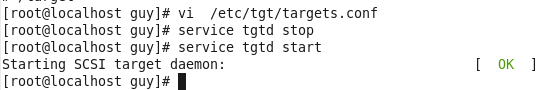

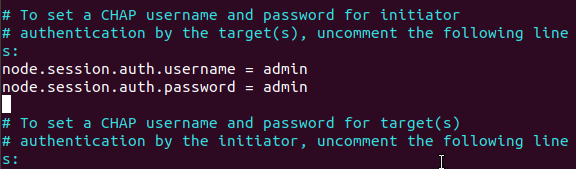

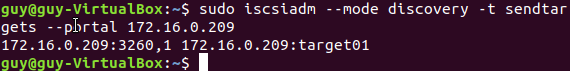

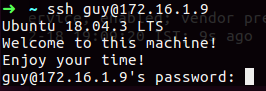

You can see that in my case the GRUB menu doesn’t contain any other option except centOS 6, I want to show you how this is done, so for that case I download Ubuntu 9 which contain GRUB legacy, I also will install Windows XP on my virtual machine and I will create two partition which one of them will contain the Linux and the other will be Windows, please note that windows contain some different boot loader but this is beyond the scope of our LPIC2 exam.